Your AI assistant confidently gives wrong answers. Your teams spend hours hunting down outdated policies. Your LLM has no idea your company even exists. Sound familiar? It’s time to talk about Retrieval-Augmented Generation.

There’s a moment that nearly every enterprise AI lead knows intimately. You’ve just finished your big internal rollout — the chatbot is live, the executives are excited, the Slack channels are buzzing. Then someone asks it a perfectly reasonable question about your company’s Q3 travel reimbursement policy, and the system confidently produces an answer that hasn’t been true since 2021.

That moment of deflation is increasingly becoming the catalyst for a very important architectural conversation: the conversation about Retrieval-Augmented Generation, or RAG.

RAG is not a new concept. It was introduced in a landmark 2020 paper led by researchers at Facebook AI Research (now Meta AI), but enterprise adoption has accelerated sharply in the last two years as organizations move from AI experimentation to AI deployment. And with that acceleration has come a clearer picture of which companies genuinely need it, which ones think they need it and don’t, and which ones desperately need it and have no idea.

To get a sharper read on the market, I sat down with Dr. Priya Nair, Head of Enterprise AI Architecture at Meridian Systems, a firm that has spent the last three years helping Fortune 500 companies navigate knowledge management in the age of large language models. Her take was pointed:

“Most organizations I walk into are dealing with some version of the same five problems. And RAG solves all of them — when it’s implemented right.” — Dr. Priya Nair, Head of Enterprise AI Architecture, Meridian Systems

So what are the telltale signs? Here are seven of the most significant — drawn from interviews with enterprise architects, AI product leads, and CIOs across industries from financial services to healthcare to logistics.

Sign #1: Your AI Confidently Makes Things Up About Your Own Business

Hallucinations about internal context — When your LLM produces confident, fluent, and completely wrong answers about your own products, policies, pricing, or people — that’s the clearest possible signal that the model has no grounded access to your organizational knowledge.

This is the most obvious sign, and yet it continues to catch organizations off guard. Large language models are trained on vast corpora of public internet data. They know about general software engineering, global history, basic chemistry. What they decidedly do not know is that your company updated its parental leave policy in October, that your flagship product now has a new SKU, or that the person listed as your CFO on a 2022 press release left the company 18 months ago.

When the model doesn’t know something, it doesn’t say “I don’t know.” It infers. It fills in the blanks with the best approximation it can construct from adjacent patterns in its training data. The result looks authoritative, reads fluently, and is often subtly or catastrophically wrong.

RAG solves this by anchoring responses to a retrieval step. Before generating an answer, the system first searches your company’s actual documents, databases, wikis, and policies — and grounds the response in what it actually finds. The model still handles language generation, but the facts come from your source of truth, not from statistical inference.
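To make the retrieve-then-generate flow concrete, here is a minimal sketch in Python. It is an illustration under loud assumptions, not a production design: the `Document` class, the two sample policies, and the word-overlap `retrieve` function are all made up for this example, and the word-overlap scoring is only a toy stand-in for the vector search a real pipeline would use. The call to the language model itself is left out; the sketch stops at the grounded prompt that would be sent to it.

```python
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase, split on whitespace, and strip basic punctuation."""
    return {word.strip(".,?!:;()\"'").lower() for word in text.split()} - {""}


def retrieve(query: str, corpus: list[Document], k: int = 2) -> list[Document]:
    """Rank documents by shared-word count with the query.

    Toy stand-in for vector search; documents with no shared words are dropped.
    """
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(doc.text)), doc) for doc in corpus]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:k]]


def build_grounded_prompt(query: str, retrieved: list[Document]) -> str:
    """Assemble a prompt that restricts the model to the retrieved context."""
    if retrieved:
        context = "\n".join(f"[{doc.doc_id}] {doc.text}" for doc in retrieved)
    else:
        context = "(no matching documents found)"
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical corpus, invented for illustration only.
corpus = [
    Document("travel-policy", "Hotel stays are reimbursed up to the nightly cap when a flight is cancelled."),
    Document("leave-policy", "Parental leave was extended in October."),
]

question = "Is a hotel reimbursed when my flight is cancelled?"
prompt = build_grounded_prompt(question, retrieve(question, corpus, k=1))
print(prompt)
```

The point of the sketch is the division of labor: retrieval decides which facts the model sees, and the prompt tells the model to stay inside them and to admit when the context has no answer.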

Sign #2: Your Knowledge Base Is a Graveyard of Outdated Documents

Stale documentation problem — If your teams routinely discover that the “official” version of a document is months or years out of date — or worse, have stopped trusting the knowledge base entirely — static AI deployments are only going to amplify this problem.

Here’s a paradox that plagues nearly every large organization: the more valuable a document is, the more frequently it changes, and therefore the more likely the version being circulated is out of date. Pricing decks. Compliance policies. Onboarding guides. Technical runbooks. These are exactly the documents employees need most, and exactly the ones that become stale fastest.

Fine-tuned models — an alternative approach where you bake company knowledge directly into the model’s weights through additional training — are fundamentally unsuited to this dynamic. Re-training a model every time a policy changes is prohibitively expensive, slow, and technically complex. Even with the best MLOps pipelines, you’re typically looking at weeks of lag between a document update and a model that reflects it.

RAG pipelines, by contrast, can ingest document updates in near real time. Update the source document, re-embed and re-index the affected chunks in the vector store, and the next query reflects the change. For compliance-heavy industries like finance, insurance, and healthcare, this is not a nice-to-have. It’s an operational necessity.
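One common way to keep re-indexing cheap is to re-process a document only when its content has actually changed. The sketch below shows that idea with a content hash. The `InMemoryIndex` class is a hypothetical stand-in for a vector store, and the policy text is invented; a real pipeline would re-chunk and re-embed at the point marked in the comment.

```python
import hashlib


class InMemoryIndex:
    """Hypothetical stand-in for a vector store: doc_id -> (content hash, text)."""

    def __init__(self) -> None:
        self._entries: dict[str, tuple[str, str]] = {}

    def upsert_if_changed(self, doc_id: str, text: str) -> bool:
        """Re-index only when the content differs. Returns True if work was done."""
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        existing = self._entries.get(doc_id)
        if existing is not None and existing[0] == digest:
            return False  # unchanged, skip the expensive steps
        # A real pipeline would re-chunk and re-embed the document here.
        self._entries[doc_id] = (digest, text)
        return True

    def get(self, doc_id: str) -> str:
        """Return the currently indexed text for a document."""
        return self._entries[doc_id][1]


index = InMemoryIndex()
first = index.upsert_if_changed("travel-policy", "Nightly hotel cap: 150.")
unchanged = index.upsert_if_changed("travel-policy", "Nightly hotel cap: 150.")
updated = index.upsert_if_changed("travel-policy", "Nightly hotel cap: 200.")

print(first, unchanged, updated)   # True False True
print(index.get("travel-policy"))  # Nightly hotel cap: 200.
```

Because the second call sees an identical hash, it does no work, which is what makes frequent sync jobs against a large document store affordable.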

Sign #3: Different Teams Are Using Different Sources of Truth

Knowledge fragmentation across the org — Sales is working off one version of the product spec. Engineering is working off another. Customer support is winging it. When organizational knowledge is fragmented, an AI layer doesn’t fix the problem — it scales it.

Knowledge fragmentation is arguably the silent killer of enterprise productivity, and it’s one of the most underappreciated signals that RAG infrastructure is overdue. When different functions within the same company are operating from different versions of “the truth,” the AI assistant you deploy will faithfully replicate whichever fragment it happens to have access to.

Dr. Nair describes this as one of the most common scenarios she encounters: “I’ll go into a large enterprise and ask five people the same question about their product. I’ll get five different answers — not because anyone is lying, but because there are five different document repositories that each think they’re canonical. When you deploy an LLM on top of that, you just get a very articulate sixth answer.”

Interview: Dr. Priya Nair, Head of Enterprise AI Architecture, Meridian Systems

Me: When you walk into an enterprise that’s struggling with knowledge fragmentation, how do you typically frame the RAG conversation?

Dr. Nair: I start by asking them one question: “If I asked your AI assistant where to find your most current product pricing, what would it say?” Most of the time, they either don’t know, or they’ve already been burned by this exact scenario. That tends to make the conversation very concrete, very quickly. RAG isn’t an AI upgrade — it’s a knowledge plumbing project that your AI makes necessary.

Me: What’s the biggest misconception companies bring into these conversations?

Dr. Nair: That RAG is a single product you buy and plug in. It’s not. It’s an architectural pattern — a pipeline that involves ingestion, chunking, embedding, retrieval, re-ranking, and then generation. Each of those stages has design decisions baked in, and those decisions affect quality enormously. You can implement RAG badly, and plenty of companies do. The ones that do it well invest time in evaluating retrieval quality, not just generation quality.

Me: For enterprises weighing RAG against just building a better traditional knowledge base, how do you help them think through that?

Dr. Nair: That’s actually a really important question to get right before you start building. It’s worth reading frameworks like the one outlined in “Internal Knowledge Base vs RAG: Choosing the Right Enterprise Knowledge Management Solution” — that piece does a good job laying out how each approach handles scale, update frequency, and query complexity differently. In short: if your users are doing structured lookups with predictable queries, a well-organized knowledge base can absolutely be sufficient. But if they’re asking nuanced cross-domain questions in natural language, and your document corpus is large and dynamic, you need RAG.
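Of the pipeline stages Dr. Nair lists, chunking is the easiest to show in a few lines. The following is a rough sketch of one simple strategy, fixed-size word windows with overlap, so that a sentence split at a boundary still appears whole in at least one chunk. The function name and the default sizes are assumptions chosen for illustration; they are not a recommendation from the interview, and real systems often chunk by headings, sentences, or tokens instead.

```python
def chunk_words(text: str, chunk_size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into word windows of chunk_size, each sharing `overlap` words with the previous one."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be at least 0 and smaller than chunk_size")

    words = text.split()
    step = chunk_size - overlap
    chunks: list[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
chunks = chunk_words(sample, chunk_size=4, overlap=1)
print(chunks)  # ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

Even in a toy like this, the design decisions she mentions are visible: window size and overlap directly change what the retriever can find later.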

Sign #4: Your Employees Spend More Time Finding Information Than Using It

High information retrieval friction — If internal research — finding the right policy, the right precedent, the right specification — consumes a disproportionate share of your team’s working hours, that’s a retrieval problem, and RAG is fundamentally a retrieval solution.

McKinsey’s research on knowledge worker productivity has long identified “searching for information” as one of the top time sinks in enterprise environments — consuming anywhere from 15% to 35% of working hours depending on the function.

Those numbers haven’t improved meaningfully in years, because the underlying problem isn’t access to information — it’s access to the right information, in the right context, at the right moment.

Traditional search — keyword-based, metadata-dependent — is remarkably poor at handling the way people actually ask questions. Employees don’t type reimbursement_policy_v4_FINAL.pdf into a search bar. They type "Can I expense a hotel stay if my flight was cancelled?" RAG systems are architecturally designed to bridge exactly this gap, using dense vector embeddings to match semantic intent rather than lexical overlap.
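The gap between lexical overlap and semantic matching is easier to see with numbers. In the sketch below, the three-dimensional vectors are written by hand purely for illustration; in a real system they would come from an embedding model and have hundreds or thousands of dimensions. The document texts and the second filename are likewise invented.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def lexical_overlap(a: str, b: str) -> int:
    """Number of whitespace-separated words the two strings share (case-insensitive)."""
    return len(set(a.lower().split()) & set(b.lower().split()))


# Hand-written toy vectors. Dimensions loosely mean:
# (travel expenses, HR leave, IT security). Not produced by any real model.
query_text = "Can I expense a hotel stay if my flight was cancelled?"
query_vec = [0.9, 0.1, 0.0]

documents = {
    "reimbursement_policy_v4_FINAL.pdf": (
        "Lodging costs during travel disruption are reimbursable.",
        [0.8, 0.2, 0.1],
    ),
    "password_rotation.pdf": (
        "Rotate credentials every ninety days.",
        [0.0, 0.1, 0.9],
    ),
}

for name, (text, vec) in documents.items():
    print(name, lexical_overlap(query_text, text), round(cosine_similarity(query_vec, vec), 3))

best_match = max(documents, key=lambda name: cosine_similarity(query_vec, documents[name][1]))
print("best match:", best_match)
```

The question and the reimbursement policy share no words at all, so a keyword search would score them at zero, yet their vectors point in nearly the same direction.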

The productivity gains in well-implemented RAG deployments can be significant. Several companies in the legal, consulting, and technical support sectors have reported 40–60% reductions in time-to-answer for complex internal queries after RAG deployment — with the added benefit that the answers are sourced, auditable, and traceable back to specific documents.

Sign #5: You’re Operating in a Regulated or Compliance-Sensitive Environment

Compliance and auditability requirements — In regulated industries — finance, healthcare, legal, pharma — the ability to cite a source for every AI-generated assertion isn’t a UX feature. It’s a legal requirement. RAG’s retrieved-context architecture makes this possible in a way that pure generation simply cannot.

If your industry requires that AI-assisted outputs be traceable to a specific document, policy version, or data source, then an LLM generating responses purely from its parametric memory is not just risky — in some regulatory frameworks, it may not be permissible at all.

RAG’s architecture is inherently source-aware. The context retrieved to ground a response is a first-class artifact in the pipeline — it can be logged, surfaced to the user as a citation, retained for audit trails, and compared against the document version in use at the time of the query.
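As a rough illustration of what "first-class artifact" can mean in practice, the sketch below stores the retrieved sources alongside each answer so they can be logged and shown as citations. The record layout, field names, and sample values are assumptions made for this example, not a regulatory schema; what an auditor actually requires will vary by industry and jurisdiction.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class RetrievedSource:
    doc_id: str
    version: str
    excerpt: str


@dataclass(frozen=True)
class AuditRecord:
    query: str
    answer: str
    sources: list[RetrievedSource]
    timestamp: str


def make_audit_record(query: str, answer: str, sources: list[RetrievedSource]) -> AuditRecord:
    """Bundle the answer with the exact context that grounded it, stamped in UTC."""
    return AuditRecord(
        query=query,
        answer=answer,
        sources=list(sources),
        timestamp=datetime.now(timezone.utc).isoformat(),
    )


def format_citations(record: AuditRecord) -> str:
    """Render the sources as a short citation string for display to the user."""
    return "; ".join(f"{s.doc_id} (version {s.version})" for s in record.sources)


# Hypothetical values, invented for illustration only.
record = make_audit_record(
    query="Can I expense a hotel stay if my flight was cancelled?",
    answer="Yes, up to the nightly cap.",
    sources=[RetrievedSource("travel-policy", "2024-03", "Hotel stays are reimbursed up to the nightly cap.")],
)

log_line = json.dumps(asdict(record))  # one JSON line per query, suitable for an append-only log
print(format_citations(record))        # travel-policy (version 2024-03)
```

Keeping the document version in the record is what lets someone later compare an answer against the policy that was in force when the question was asked.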

This is why RAG has become essentially table stakes in enterprise deployments within financial services and healthcare, where regulators are increasingly scrutinizing AI-assisted decision-making.

“Citability is one of the most underappreciated properties of RAG,” Dr. Nair told me. “When a healthcare system deploys a clinical assistant, every answer needs to point back to a clinical guideline with a version number and a date. RAG makes that natural. A pure generation model makes it essentially impossible.”

Sign #6: Your AI Deployment Is Stuck at the Pilot Stage

The “permanent pilot” problem — If your AI tool has been “in pilot” for more than six months and executives keep citing “accuracy concerns” as the reason it hasn’t scaled, you’re almost certainly dealing with a grounding problem — not a model quality problem.

The “permanent pilot” is one of the most frustrating and common failure modes in enterprise AI.

A company deploys a chatbot or AI assistant, users find it impressive in demos, and then it quietly fails to scale because the real-world queries it receives expose a foundational flaw: the model doesn’t know enough about the organization it’s supposed to serve.

Organizations in this situation often respond by trying to write better prompts, switching model providers, or investing in UX improvements. These are the wrong levers. The underlying problem is that the model has no access to the institutional knowledge it needs to be useful at enterprise scale. That’s a retrieval infrastructure problem, and prompt engineering won’t fix it.

If the barrier to broader deployment of your AI tools is consistently described as “it gets things wrong too often” or “employees don’t trust it,” that is almost always a RAG conversation waiting to happen.

Sign #7: Your Competitors Have Deployed AI Assistants That Actually Work

Competitive displacement risk — When customers, partners, or candidates start commenting that another company’s AI tools are significantly better at providing accurate, contextual, up-to-date answers — the architectural gap driving that difference is almost certainly RAG.

This sign is perhaps the most uncomfortable one to acknowledge, but in 2026 it has become increasingly visible. The gap between organizations that have invested in RAG infrastructure and those that haven’t is no longer a gap in AI ambition — it’s a gap in AI usefulness. And usefulness is starting to show up in customer experience, employee productivity, and talent attraction in measurable ways.

Sales teams at companies with well-implemented RAG-backed tools can answer complex, context-specific customer questions in real time. Customer support organizations with RAG pipelines resolve tickets faster and with higher first-contact resolution rates. Onboarding programs that leverage RAG to give new employees instant access to institutional knowledge reduce time-to-productivity significantly.

The companies building these capabilities aren’t primarily doing so because of enthusiasm for the technology. They’re doing it because they identified specific signs — many of the ones described in this article — and responded before those signs became crises.

So You’ve Identified the Signs — What’s Next?

Recognizing that your organization needs RAG is the straightforward part. Building it well is considerably more involved. The most common mistake organizations make is treating RAG as a product purchase rather than an architectural investment. There are excellent managed RAG platforms — from the major cloud providers as well as specialized vendors — but even these require careful decisions about document ingestion strategy, chunking methodology, embedding model selection, retrieval scoring, and evaluation frameworks.
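An evaluation framework does not have to start out elaborate. The sketch below computes two widely used retrieval metrics, recall@k and mean reciprocal rank, over a tiny hand-labeled set. The document IDs and relevance labels are invented for illustration; in practice the labeled set would come from real user questions reviewed by subject-matter experts.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)


def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


# Each entry: (ranked results returned by the retriever, documents a reviewer marked relevant).
# Hypothetical data, invented for illustration only.
eval_set = [
    (["travel-policy", "leave-policy", "pricing-deck"], {"travel-policy"}),
    (["pricing-deck", "leave-policy", "runbook"], {"leave-policy", "onboarding-guide"}),
]

mean_recall = sum(recall_at_k(ranked, relevant, k=2) for ranked, relevant in eval_set) / len(eval_set)
mrr = sum(reciprocal_rank(ranked, relevant) for ranked, relevant in eval_set) / len(eval_set)

print(f"recall@2 = {mean_recall:.2f}")  # recall@2 = 0.75
print(f"MRR      = {mrr:.2f}")          # MRR      = 0.75
```

Tracking numbers like these separately from answer quality makes it possible to tell whether a bad response came from the retriever missing the right document or from the model misusing a document it was given.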

Before committing to a RAG build-or-buy decision, it’s worth getting clear on a prior question: is RAG actually the right solution for your specific knowledge management challenge?

A well-structured internal knowledge base may be sufficient for teams with narrow, predictable information needs. RAG becomes clearly superior when document volume is large, query diversity is high, or content freshness is critical.

For a detailed framework to navigate this decision, the comparative analysis in “Internal Knowledge Base vs RAG: Choosing the Right Enterprise Knowledge Management Solution” provides one of the more rigorous breakdowns available on how the two approaches differ across dimensions of scale, governance, cost, and query complexity. It’s a useful reference before your architecture team gets locked into any particular direction.

Dr. Nair’s closing advice was characteristically direct: “Don’t let the ‘AI strategy’ conversation distract you from the knowledge management conversation. They’re the same conversation. If your people can’t find the right information quickly and trust that it’s accurate, no AI layer is going to fix that. RAG is how you fix it — but it’s the fixing of the knowledge problem that matters, not the AI wrapping around it.”

The signs are rarely subtle. If you recognize your organization in several of the scenarios described here, the question isn’t really whether you need RAG. It’s how much longer you can afford to wait.

POSTS ACROSS THE NETWORK

Best SEO API in 2026: SE Ranking, Ahrefs, Semrush & More Compared

10 Tools That Help You Control How Search Engines See Your Website

Obsidian, Supercharged: The AI Revolution in Personal Knowledge Management

The LLM Reliability Paradox: Agents Aren’t Broken, Your Architecture Is

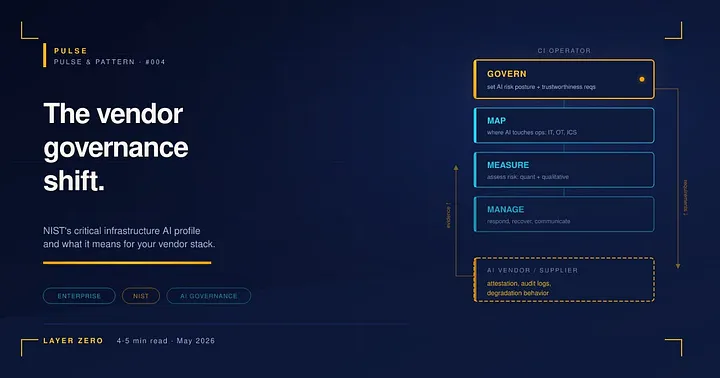

NIST’s critical infrastructure AI profile and the vendor governance shift