AI is Recreating the Conditions That Made Google’s 20% Time Groundbreaking

AI is compressing knowledge work faster than organizations can redesign themselves around it. For most knowledge workers, the actual experience of this moment isn’t liberation — it’s a faster treadmill with more options you don’t have time to explore. CEOs are making public predictions about three-day work weeks. Productivity tools are collapsing what used to take days into hours. And yet the gap between what AI makes possible and what organizational life makes permissible keeps widening.

Most companies are scheduling that possibility away.

I keep finding this in my own work: AI side projects that are clearly worth pursuing, clearly adjacent to my role, and clearly not going to get calendar time without a structural reason to protect them. That gap — between what’s possible to explore and what’s permissible to pursue — is where the next several years of competitive advantage will be won or lost.

Which is why I keep coming back to Google’s 20% Time.

Not because I think the answer is to dust off a 2004 Silicon Valley policy and reapply it. But because the problem it was trying to solve is exactly the problem we’re sitting inside right now — and the way it failed is exactly the way any modern equivalent will fail if we’re not honest about the mechanism.

Let’s start with the name, because the name matters.

It Was Called 20% Time. And That’s Not a Minor Correction.

The program is often referred to informally as the “80/20 rule” or the “80/20 program” — as though it were a prioritization framework rather than a specific cultural practice. The actual name was 20% Time, and that framing is the point. You weren’t managing your work in an 80/20 ratio. You were getting a fifth of your week to pursue something you believed in. The difference between a prioritization heuristic and a structural time allocation is enormous, and the confusion between them has followed every subsequent conversation about reviving the program.

The policy appeared publicly, in writing, in Google’s 2004 IPO prospectus: “We encourage our employees… to spend 20% of their time working on what they think will most benefit Google.” That sentence is remarkable for what it doesn’t say. It doesn’t say “approved by your manager.” It doesn’t say “aligned with your team’s OKRs.” It says what they think will most benefit Google — trusting individual engineers to be both motivated and calibrated enough to make that call.

Gmail came from 20% Time. Paul Buchheit started building it in 2001, three years before it launched. Google News came from 20% Time — Krishna Bharat built the first version after 9/11 as a way to automatically aggregate news about the attacks. Google Suggest (the autocomplete feature you use every time you type into a search bar) started as a 20% project by Kevin Gibbs. AdSense, which became one of Google’s most profitable products, traces at least partial lineage to 20% exploration.

That list is real. The mythology around it, though, has grown into something the facts don’t quite support — and that gap is where the useful lessons live.

What 20% Time Actually Did (vs. What It Gets Credit For)

Here is the part that tends to get uncomfortable: none of those products launched because of 20% Time. They launched because exceptional engineers became so convinced of their projects that they eventually got dedicated teams, real compute resources, and full organizational commitment.

20% Time didn’t build Gmail. 20% Time built the conditions under which Paul Buchheit could convince himself and then others that Gmail was worth building. Those are meaningfully different things. The program addressed the idea origination problem: the systematic underinvestment in speculative exploration that large organizations almost universally suffer from. It did not, and could not, address the execution problem. Those products needed full engineering teams. They needed infrastructure. They needed someone to go to Larry Page and say “this is real.”

Conflating the origination space with the full product lifecycle has caused two decades of confused conversation about what creative freedom programs actually do. Organizations that tried to replicate the program often did so expecting finished products. What they got was a lot of interesting prototypes and frustrated engineers.

Understanding that distinction isn’t a criticism of 20% Time. It’s actually the case for it, provided you correctly understand what the program was doing. The question for any modern equivalent isn’t “will this produce the next Gmail?” It’s “are we systematically starving the stage of work where people figure out that Gmail is worth imagining?”

Why It Ended (And Why Nobody Announced It)

The program didn’t end with a memo. That’s the tell.

By 2013, multiple reports — from Business Insider, from former Googlers, from Marissa Mayer herself in earlier comments — documented that 20% Time had become largely nominal. Employees still believed in it as a cultural artifact, but the actual practice had eroded. Former Google X founder Sebastian Thrun confirmed the shift. The reasons compound:

Google scaled from a few hundred engineers to tens of thousands. At small scale, a single engineer’s 20% project could become a product in months because the organization was flat enough for it to find a path to launch. At large scale, every 20% project had to fight for resources, visibility, and organizational alignment in a system that was optimized for delivering against roadmaps, not discovering new ones.

Marissa Mayer named the workload problem precisely when she described the “120% phenomenon”: in practice, 20% Time came on top of full performance expectations, not instead of 20% of them. Engineers who visibly took 20% time risked appearing less productive than peers who appeared to operate at 100% all the time. The cultural signal that “we value exploration” was undermined by the performance signal that “we reward throughput.” Both signals were real. The performance signal was louder.

Finally, there was the alignment problem. As Google’s portfolio expanded, the gap between “what an individual engineer finds interesting” and “what Google’s business priorities are” widened. A 20% project on an AI feature that might have seemed speculative in 2006 would look either like strategic genius or dangerous distraction in 2012 depending entirely on who reviewed it. The program needed management protection; that protection was never formally given, and at scale, the informality became a liability.

The lesson isn’t that 20% Time was naive. It’s that it was structurally unsupported — a cultural commitment without the organizational architecture to survive growth.

The AI Moment Is Recreating the Original Problem

Here’s why this history is suddenly relevant again.

AI tools have done something specific and underappreciated: they have dramatically compressed the prototype cost of side projects. What used to take two weeks of evening hours — building a rough proof-of-concept, testing an integration, standing up a demo — can now be scaffolded in an afternoon with Claude or Copilot. The minimum viable experiment has never been cheaper to run.

At the same time, AI is generating exactly the kind of opportunity surface that 20% Time was designed to explore. The number of adjacent-to-your-job AI applications that a thoughtful practitioner can identify in any given week has exploded. I experience this directly: there are AI workflow experiments I’d love to run, integrations I suspect would be genuinely valuable, tools I want to properly evaluate — and none of them are in my priority stack because they’re not assigned work. They exist in the gap between what my role requires and what I can see AI enabling.

That gap is the original 20% Time problem, reconstructed at scale by a technology that moves faster than any organizational planning process.

The CEOs talking about three-day work weeks are gesturing at one version of this: if AI compresses knowledge work, what do we do with the freed capacity? But that framing puts the question entirely in the employer’s hands. The more pointed version is: if AI is already compressing task time for individual practitioners, where is that capacity going?

The honest answer, historically, is that productivity gains from technology don’t tend to flow back to workers as exploration time. They flow back to employers as throughput. The personal computer didn’t produce a thirty-hour work week. Email didn’t either. Smartphones eroded the boundary between work and non-work, but not in the direction of more leisure. There is no structural reason to expect AI to behave differently unless something structurally changes.

The New Barrier Isn’t Time. It’s Everything Else.

If an organization decided tomorrow to reinstate 20% Time for its knowledge workers, it would solve the wrong problem.

Time is not the primary constraint on meaningful AI exploration in 2025. The constraints have migrated:

Compute and infrastructure. A meaningful AI project, the kind that could actually demonstrate value and scale, requires API access, storage, deployment infrastructure, and in many cases significant compute. An individual engineer’s 20% of the workday does not come with 20% of the company’s cloud budget. The prototype layer is cheap now; everything above it is expensive and organizationally controlled.

Data access. The most valuable AI applications for any specific organization involve that organization’s data — its customer interactions, its process history, its proprietary knowledge. An individual employee’s 20% time exploration rarely comes with sanctioned access to the data that would make the project genuinely valuable rather than a proof-of-concept on synthetic examples.

Organizational permission. Perhaps the deepest barrier is the one that ended the original program: the informal permission structure. A 20% project that touches customer data, integrates with production systems, or could plausibly compete with or embarrass an existing team needs active organizational protection. Without that, it dies not from executive opposition but from the friction of nobody officially supporting it.

This doesn’t mean the 20% Time idea is obsolete. It means that any serious modern version would need to provide not just time but a structural package: budget, data access, explicit organizational cover, and a clear path from prototype to decision. That is a very different thing to offer than “you can work on whatever you want on Fridays.”

What Honest Revival Looks Like

I’d argue a structurally honest 20% Time equivalent in 2025 has four components that the original lacked:

A defined scope with resources attached. Not “explore anything” — “explore AI applications within [domain] with access to [specific data environment and compute budget].” The original program’s openness was part of its appeal and part of its failure.

Manager accountability, not just cultural permission. The 120% problem happened because managers were never held accountable for protecting the time. A modern version needs to explicitly reduce output expectations for the time allocated — not add exploration on top.

A structured path from prototype to decision. Projects need a predefined review point where the organization commits to either resourcing, archiving, or redirecting the work. The original 20% Time produced an enormous graveyard of prototypes that never got that decision point.

Acknowledgment that the output is learning, not products. Most 20% projects won’t produce Gmail. Most will produce sharper judgment, better-informed prioritization, and practitioners who understand what AI can and can’t do from actual experience rather than vendor decks. That is genuinely valuable and systematically undervalued in how organizations assess exploration programs.

The Informal Version Is Already Running

Here is the thing I keep coming back to: the people doing this informally are already operating their own 20% Time.

Every practitioner who spends their commute testing a new AI tool, builds a weekend workflow automation, or runs an evening experiment on a problem adjacent to their job is making the same bet Buchheit made when he started building Gmail in the margins of his week. The exploration is happening. The question is only whether organizations will design around it or ignore it.

The professionals who will define how AI reshapes their industries aren’t waiting for a policy. They’re already in the gap — the space between what their role requires today and what they can see becoming necessary tomorrow. Some of them are doing it on their own time. Some have found managers who quietly protect the space. A rare few work in organizations that have thought seriously about this.

The rest are scheduling it away.

Google’s 20% Time didn’t fail because the idea was wrong. It failed because the organization never built the architecture to protect it at scale, never resolved the performance signal contradiction, and never clearly defined what success looked like. Those failure modes are entirely replicable. So is the underlying insight.

The question isn’t whether to revive the program. It’s whether we’re serious enough about what comes next to actually build the structure the idea always needed.

What’s the AI side project you keep finding outside your priority stack, and what would it take for your organization to treat that finding as signal rather than distraction?

POSTS ACROSS THE NETWORK

How LLM Visibility Is Changing Technical SEO and B2B Search

How Businesses Use Remote Developers to Accelerate Growth

Best FedRAMP Compliance Automation Tools for SaaS

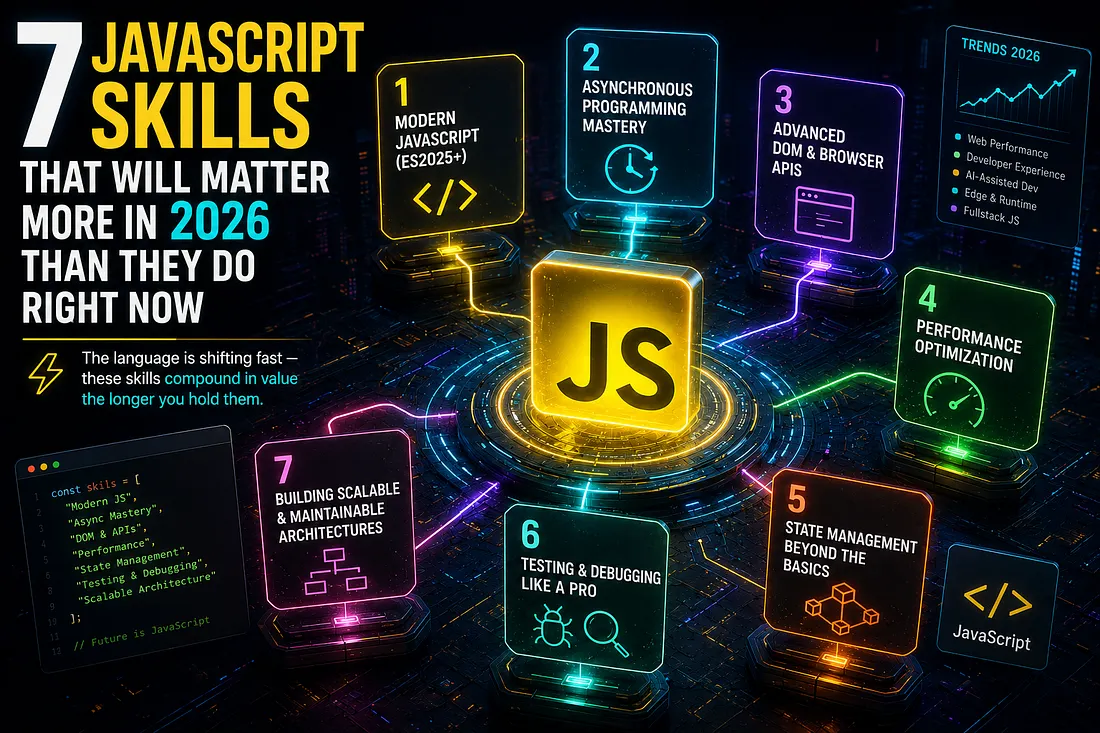

7 JavaScript Skills That Will Matter More in 2026 Than They Do Right Now