Most teams building LLM-powered features in production discover the same thing: the demo works, the pilot looks promising, and then something quietly breaks. A contract analysis tool starts citing clauses that don’t exist. A compliance assistant invents regulatory thresholds. A customer-facing chatbot confidently answers a question with plausible-sounding fiction. These aren’t edge cases you can tune away with a few prompt tweaks. They’re symptoms of a structural problem, and the only way to address them seriously is to treat custom AI solutions as an engineering discipline rather than a product selection exercise.

The gap between “LLM that sometimes hallucinates” and “LLM-powered feature that meets a reliability SLA” is wider than most teams expect when they start. Understanding that gap means thinking about hallucination mitigation not as a single technique but as a tiered architecture decision, where each layer handles a different failure mode and each layer has its own ceiling.

Prompt engineering as a first-line fix

When a model starts producing inaccurate outputs, the first instinct is almost always to fix the prompt. Add constraints. Specify output format. Tell the model to say “I don’t know” when it’s uncertain. This works, sometimes, and it’s worth doing early because it’s cheap and fast.

The techniques that actually move the needle at this tier are few and well-understood: chain-of-thought prompting to force reasoning steps before a final answer, few-shot examples that demonstrate the failure mode you’re trying to prevent, and explicit grounding instructions that tell the model to cite specific passages rather than synthesize freely. For low-stakes features with narrow scope, this is often enough.
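As a rough illustration of how those three techniques fit into one prompt, here is a minimal sketch. The names `FewShotExample` and `build_grounded_prompt` are hypothetical, the instruction wording is only one plausible phrasing, and the output is a plain string you would pass to whatever model client you use.

```python
from dataclasses import dataclass


@dataclass
class FewShotExample:
    """Hypothetical container for one worked example shown to the model."""
    question: str
    passages: list[str]
    answer: str


def format_passages(passages: list[str]) -> str:
    # Number each passage so the model can cite it as [1], [2], ...
    return "\n".join(f"[{i}] {text}" for i, text in enumerate(passages, start=1))


def build_grounded_prompt(
    question: str, passages: list[str], examples: list[FewShotExample]
) -> str:
    # Grounding instructions, a reasoning instruction, and an explicit way out.
    parts = [
        "Answer using only the numbered passages below.",
        "Reason step by step about the relevant passages, then give a final answer.",
        "Cite the passage number for every claim, like [1].",
        'If the passages do not contain the answer, reply exactly: "I don\'t know."',
        "",
    ]
    # Few-shot examples demonstrate the behavior, including refusals.
    for ex in examples:
        parts += [
            "Passages:",
            format_passages(ex.passages),
            f"Question: {ex.question}",
            f"Answer: {ex.answer}",
            "",
        ]
    parts += ["Passages:", format_passages(passages), f"Question: {question}", "Answer:"]
    return "\n".join(parts)
```

Including at least one example whose correct answer is “I don’t know” tends to be the useful part here, since it demonstrates the failure mode rather than only describing it.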

But prompt engineering has a hard ceiling, and it arrives faster than people expect. The model still has no access to your internal data. It still has a training cutoff. It still treats its parametric memory as ground truth unless you provide contradicting context. You can instruct a model to be careful, but you can’t instruct it to know things it doesn’t know.

“We spent about three months iterating on prompts before we admitted we were just moving the hallucination around, not eliminating it. The model would stop inventing one type of fact and start inventing another.”— Priya Nair, Head of AI Products, Meridian Analytics Group

What retrieval augmented generation actually solves

Retrieval augmented generation is the structural fix that prompt engineering can’t be. The core idea is straightforward: instead of relying on the model’s parametric memory, you retrieve relevant documents at query time and inject them into the context window as grounding material. The model is then answering based on what it can see, not what it thinks it remembers.

If you’re wondering what RAG in AI actually is beyond the basic definition, the honest answer is that it’s a retrieval pipeline wired to a generation step. The quality of the output depends heavily on the quality of the retrieval. A well-tuned RAG system with a good vector store and sensible chunking strategy will outperform a poorly tuned one by a large margin, regardless of which LLM sits at the end.

For many enterprise use cases, RAG is the right answer. It gives the model access to real-time data (or near-real-time, depending on your indexing frequency), it keeps sensitive data out of model training, and it makes outputs auditable because you can trace which retrieved chunks contributed to a given answer. For a legal team that needs to query internal contracts, or a support team that needs to pull from a live knowledge base, RAG solves the problem cleanly.
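To make “a retrieval pipeline wired to a generation step” concrete, here is a deliberately toy sketch. It swaps in bag-of-words cosine similarity for a real embedding model and vector store, and the function names (`retrieve`, `build_context`) are assumptions for illustration only. What it does show is the shape of the pipeline: score chunks against the query, keep the top few, inject them into the prompt, and return the chunk ids so the answer stays traceable.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two term-count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[tuple[int, float]]:
    # Stand-in for a vector store lookup: score every chunk, keep the top k.
    q = Counter(tokenize(query))
    scored = [(i, cosine(q, Counter(tokenize(c)))) for i, c in enumerate(chunks)]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


def build_context(query: str, chunks: list[str], k: int = 2) -> tuple[str, list[int]]:
    # Drop zero-score chunks, then inject the rest as grounding material.
    ids = [i for i, score in retrieve(query, chunks, k) if score > 0]
    context = "\n".join(f"[chunk {i}] {chunks[i]}" for i in ids)
    prompt = f"Context:\n{context}\n\nQuestion: {query}\nAnswer from the context only."
    return prompt, ids  # ids give the audit trail for this answer
```

The returned `ids` are what makes the output auditable: logging them next to each generated answer lets you trace which chunks the model was shown.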

Where RAG breaks down

RAG isn’t a silver bullet. The failure modes at enterprise scale are predictable once you’ve seen them. Retrieval recall drops when documents are poorly structured, when queries are ambiguous, or when the semantic gap between a user’s question and the way information is stored in your corpus is large. In regulated domains, a retrieval miss isn’t just an inconvenience. It can mean a compliance assistant fails to surface a controlling regulation, or a medical summary tool omits a contraindication.

There’s also the context window problem. Even with models supporting very long contexts, retrieval systems that inject too many chunks start to dilute the signal. The model attends to the wrong parts. Precision matters as much as recall, and getting both right under production load requires ongoing work.
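The tension between recall and precision is easy to see with a small evaluation helper. The sketch below assumes you have a labeled set of relevant chunk ids per query (the ids and the `precision_recall_at_k` name are made up for illustration) and measures both metrics for the top `k` retrieved chunks.

```python
def precision_recall_at_k(
    retrieved: list[str], relevant: set[str], k: int
) -> tuple[float, float]:
    """Precision and recall over the top-k retrieved chunk ids."""
    top_k = retrieved[:k]
    hits = sum(1 for chunk_id in top_k if chunk_id in relevant)
    precision = hits / len(top_k) if top_k else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical ranking for one query, with three chunks labeled relevant.
ranked = ["c7", "c2", "c9", "c4", "c1"]
relevant = {"c2", "c4", "c8"}

for k in (2, 5):
    p, r = precision_recall_at_k(ranked, relevant, k)
    print(f"k={k}: precision={p:.2f} recall={r:.2f}")
```

In this made-up example, widening `k` from 2 to 5 raises recall from one in three relevant chunks to two in three, while precision falls from 0.50 to 0.40. More of what the model sees is noise, which is the dilution effect described above.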

Custom grounding architectures for production reliability

When the stakes are high enough, neither prompt engineering nor off-the-shelf RAG is sufficient. This is where custom AI/ML solutions designed around your specific domain, data topology, and accuracy requirements become necessary rather than optional.

A custom grounding architecture typically combines several components: a domain-specific retrieval layer with tuned embeddings trained on your corpus, a reranking step that filters retrieved candidates before they reach the LLM, structured data connectors that pull from authoritative sources (databases, APIs, regulatory feeds) rather than relying on document search alone, and a verification layer that checks generated claims against retrieved evidence before surfacing output to users.

The verification layer is where most teams underinvest. It’s also where the most meaningful reliability gains come from. A simple consistency check, comparing key claims in the generated output against the retrieved source material, can catch a large fraction of hallucinations before they reach a user. This doesn’t require a second large model. A smaller, faster classifier or even rule-based extraction can handle many cases.
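A rule-based version of that consistency check might look like the sketch below. It is an assumption-heavy illustration, not a production verifier: the stopword list, the 0.6 overlap threshold, and the function names are all arbitrary choices, and a real system would likely use an entailment classifier for anything beyond near-verbatim claims. Each generated sentence counts as grounded only if most of its content words, and every number it contains, appear together in a single retrieved passage.

```python
import re

# Small illustrative stopword list; a real one would be larger.
STOPWORDS = {
    "the", "a", "an", "of", "to", "in", "is", "are", "and", "or",
    "for", "on", "with", "be", "must", "by",
}


def content_tokens(text: str) -> set[str]:
    return {t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS}


def is_grounded(sentence: str, passages: list[str], min_overlap: float = 0.6) -> bool:
    tokens = content_tokens(sentence)
    if not tokens:
        return True  # nothing checkable in this sentence
    numbers = {t for t in tokens if t.isdigit()}
    for passage in passages:
        p_tokens = content_tokens(passage)
        overlap = len(tokens & p_tokens) / len(tokens)
        # Numbers must match exactly: "60 days" is not supported by "30 days".
        if overlap >= min_overlap and numbers <= p_tokens:
            return True
    return False


def ungrounded_sentences(answer: str, passages: list[str]) -> list[str]:
    # Naive sentence split on terminal punctuation followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    return [s for s in sentences if not is_grounded(s, passages)]
```

Anything returned by `ungrounded_sentences` can be routed to a fallback: suppress the sentence, ask the model to regenerate with stricter instructions, or send the answer to human review. The strict number check is intentional, since invented thresholds and dates are among the most damaging hallucinations in regulated domains.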

“The architecture that finally worked for us wasn’t more sophisticated retrieval. It was adding a post-generation verification step that flagged outputs where the model’s claims couldn’t be grounded back to a retrieved passage.”— Tomasz Wielki, Principal Engineer, Colbeck Systems

Handling the limitations of large language models honestly

One thing worth saying plainly: the limitations of large language models are not going away with the next model release. Larger context windows help with some problems. Better instruction following helps with others. But a model trained on data with a cutoff date will still not know what happened after that date. A model with no access to your internal systems will still be unable to reason about your specific data. These are architectural constraints, not bugs.

This matters for how you scope reliability commitments to stakeholders. If your organization is building LLM-powered features in finance, legal, or healthcare, the question isn’t whether the model will ever hallucinate. It will. The question is whether your architecture catches and handles those failures before they propagate into decisions. That framing shifts the conversation from “can we trust the AI” to “what does our verification and fallback logic look like,” which is a more tractable engineering problem.

Choosing the right tier for your use case

The tiered approach (prompt engineering first, RAG as a structural fix, custom grounding for production-grade reliability) isn’t a linear path where every team needs to reach tier three. It’s a framework for matching architecture to risk.

A low-stakes internal tool with a narrow domain and forgiving users can often stop at tier one or two. A clinical decision support feature or an automated regulatory filing tool probably can’t. The honest evaluation is: what is the cost of a hallucination in this specific context, and does your current architecture have a credible answer for when it happens?

Aimprosoft’s team wrote about this specific tradeoff, including where RAG fits relative to other architectural choices for enterprise AI, in their piece on custom AI solutions and RAG.

The teams that build reliable LLM features in high-stakes domains are not the ones who found a prompt that works. They’re the ones who treated hallucination as a systems problem and built verification into the architecture from the start, before the first production incident made it unavoidable.

POSTS ACROSS THE NETWORK

I Built an AI System That Replaced 6 Hours of Daily Manual Work

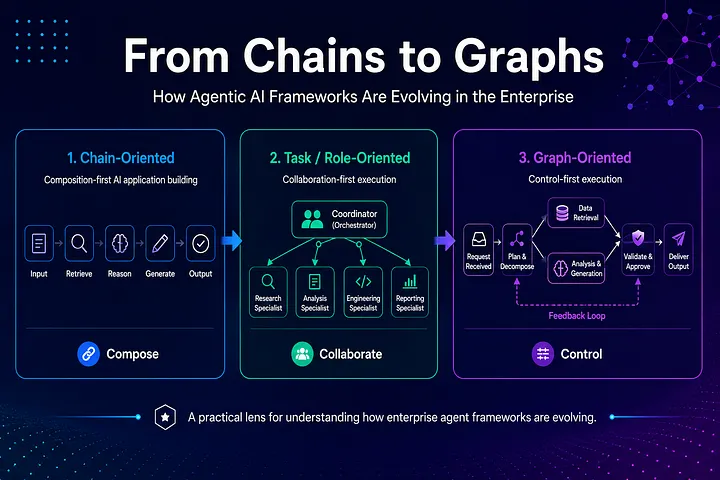

Beyond the Model: The Evolving Architecture of Enterprise AI Agents

The Ways AI and Big Data Work Together for Better Predictions

Why Web3 Continues Growing Beyond Digital Assets