I Tested 5 Free AI Tools as an M.Phil Student — Here’s What Surprised Me

Twelve days.

That’s how long I have until my M.Phil thesis semi-final submission. My research looks at the nephrotoxic effects of Aflatoxin B1 (AFB1) on rat kidneys and the potential curative role of Hydroxygenkwanin against that damage. It’s dense, it’s technical, and the literature is deep enough that you can lose a full day just chasing one citation trail.

So when the date was officially announced, I didn’t panic. I did something more useful: I got systematic about which AI tools were actually worth my time.

Over the past several weeks, I’ve been running five free AI tools through the kind of work that defines M.Phil life in the biomedical sciences. Not demo tasks. Real ones: synthesizing research papers on mycotoxin-induced renal injury, drafting methodology sections, cleaning up my academic writing, and pressure-testing arguments before my supervisor sees them.

Here’s what I found. Honest. No sponsorships. No fluff.

The 5 Tools (and Why I Picked Them)

- Claude (free tier) — long-document feedback and argument building

- ChatGPT (free tier) — drafting and explaining complex concepts

- DeepSeek (free) — literature-heavy research queries

- Perplexity AI (free tier) — fast source discovery

- Grammarly (free tier) — academic writing polish

I’ll be direct about what each one actually did for me.

1. Claude — The One That Felt Like a Real Research Partner

I’ll start here because it’s the one that genuinely changed how I work.

My thesis involves two parallel threads: establishing the nephrotoxic mechanism of AFB1 in rat kidney tissue, and then building the case for Hydroxygenkwanin as a curative intervention. Keeping those threads coherent across multiple chapters, while making sure the methodology, results, and discussion sections speak to each other, is harder than it sounds when you’re deep inside the work.

I uploaded a full draft chapter to Claude and asked it to tell me where the argument lost coherence. What came back surprised me. It didn’t just summarize what I’d written; it flagged that I’d introduced oxidative stress pathways in the literature review but hadn’t circled back to them in my discussion of the histopathological findings. It was right. I had made that exact error and hadn’t caught it.

Beyond structural feedback, I used Claude for something I didn’t expect to rely on it for: untangling theory. When I needed to explain the hepatotoxic-to-nephrotoxic mechanism of AFB1 in plain language for the background section, which has to be readable by a broader committee, Claude helped me work through the explanation in layers until it was clear without being reductive.

And when I had an outline but couldn’t find the entry point for a section, it helped me find the argument’s spine.

The honest limitation: It doesn’t have access to the latest published literature, so it can’t replace database searches. But as a thinking partner for the work you’ve already gathered? Nothing else came close.

Verdict: My most-used tool throughout this process. Start here for anything involving structure, clarity, or argument.

2. ChatGPT — Fast, Fluent, and Requires Supervision

ChatGPT earns its place as the second-most useful tool, but for different reasons than Claude.

Where I relied on Claude for depth, I used ChatGPT for speed. When I was stuck on how to phrase a methodological justification, or needed a first draft of a paragraph I could then reshape, ChatGPT got me moving. It writes quickly, adapts to tone when you ask it to, and handles the blank-page problem better than anything else I’ve tried.

I also used it to cross-check my understanding of pharmacological concepts when I wanted a quick plain-language explanation, the kind you’d get from a knowledgeable colleague rather than from a textbook.

The surprise that caught me: I asked it to suggest recent studies on Hydroxygenkwanin’s renoprotective effects. It gave me four citations. When I searched for them in PubMed, two didn’t exist. The author names were real, the journal names looked right, but the papers themselves were fabricated.

I already knew fabricated citations were a known issue. Seeing it happen with my own research topic still felt like a warning shot. In the biomedical sciences, a fake citation isn’t just an embarrassment; it’s a serious credibility problem.

Verdict: Excellent for drafting, paraphrasing, and unblocking yourself. Verify every single reference it produces before you use it anywhere.

3. DeepSeek — Underrated for Technical Literature

DeepSeek doesn’t get as much attention as the other tools, but for research-heavy queries it earned a place in my workflow.

I used it primarily for complex, technical questions: the kind where I needed the answer grounded in scientific reasoning, not general knowledge. It handled mycotoxin biochemistry questions with more precision than I expected, and it was noticeably better than most free tools at staying in the technical register without slipping into oversimplified explanations.

It’s also more willing to say “this is uncertain” or “the literature is divided on this” than tools that tend to present everything with equal confidence. For a thesis student, that epistemic honesty matters.

The limitation: Its interface is less intuitive for document-level work. I used it mostly for specific queries, not for feedback on my writing.

Verdict: Worth using when you need technically precise answers or want a second opinion on a scientific claim. Better than it looks at first glance.

4. Perplexity AI — Good for Getting Oriented, Weak on Depth

Perplexity’s strength is that it shows you its sources in real time. Every answer comes with links, which means you can immediately evaluate whether what it’s telling you comes from a credible place.

For orientation, when I was entering a sub-area of the AFB1 literature I hadn’t read deeply yet, that transparency was genuinely useful. It gave me a fast map of who was publishing in an area and what the key debates were.

But when I pushed it toward specifics, such as the histopathological grading criteria for AFB1-induced renal injury or the dose-response data in recent rat-model studies, it started to reach its limits. The answers became more general. The sources became less directly relevant.

Verdict: Use it early in your research process for orientation and discovery. Don’t rely on it for technical depth in a specialized field.

5. Grammarly — The Unglamorous One That Still Earns Its Place

There’s nothing exciting to say about Grammarly. It does what it says.

But I want to be honest about why it belongs on this list, because I almost left it out for being too obvious.

Academic writing in the biomedical sciences has a specific register. Sentences need to be precise without being tortured. Passive voice is sometimes correct and sometimes a crutch. Technical terms can’t be changed for the sake of variety. Grammarly, particularly the clarity and conciseness suggestions, helped me trim the wordiness that creeps into thesis writing when you’ve been staring at a section for too long.

It also catches the small errors that your brain stops seeing after the twentieth read: a missing article, a comma splice, a tense inconsistency in the methods section.

The limitation: The free tier is useful but deliberately limited. It flags issues without always explaining the best fix. And it occasionally suggests changes that would make the sentence less precise, not more, so judgment is still required.

Verdict: Run every section through it before your supervisor sees it. Not because it’s brilliant, but because it catches what you’re too tired to catch yourself.

What I Actually Learned

I didn’t expect this experiment to change how I think about AI tools. I thought it would just tell me which ones to keep open and which ones to close.

It told me something more useful: these tools work best when you know exactly what you’re asking them to do, and worst when you hand them responsibility for something that requires your own disciplinary judgment.

Claude didn’t understand my thesis. It understood structure, argument, and language. I brought the science. The combination worked.

ChatGPT didn’t know Hydroxygenkwanin the way my literature review knows it. But it could help me write about it more clearly once I gave it the context.

None of these tools replaced a single hour of real research. But together, they gave back hours I would have spent on the parts of the process that weren’t really the research: the blank page, the tangled paragraph, and the argument that made sense in my head but not on the page.

With April 27th twelve days away, that’s not a small thing.

Quick Reference: What to Use When

- Stuck on a draft or can’t find the opening sentence? → ChatGPT

- Need feedback on a full chapter or argument structure? → Claude

- Researching a technical concept you’re unfamiliar with? → DeepSeek

- New to a sub-topic and need a fast orientation? → Perplexity

- Final polish before submission? → Grammarly

None of them are a shortcut. All of them, used honestly, are worth your time.

Completing an M.Phil on AFB1-induced nephrotoxicity and the curative potential of Hydroxygenkwanin. Writing occasionally about research life, tools, and what graduate school actually looks like.

POSTS ACROSS THE NETWORK

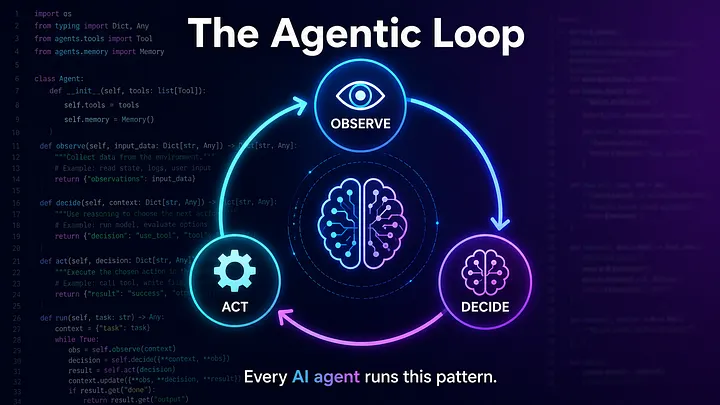

The Compounding Problem in Agentic AI Era

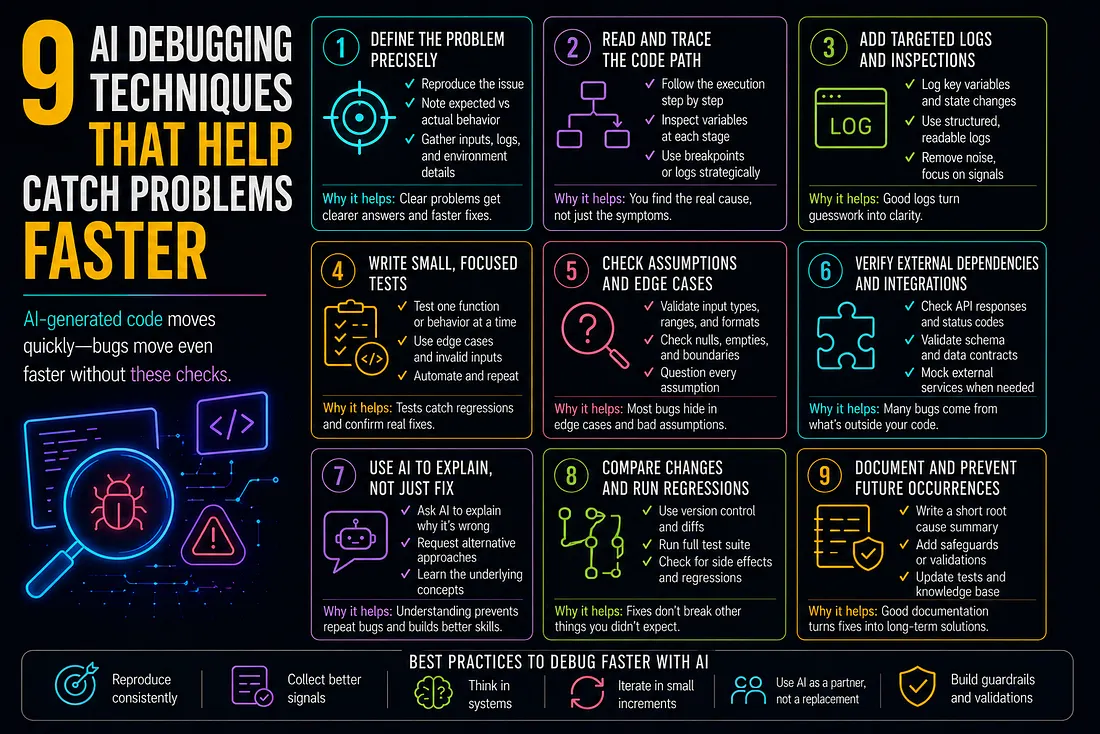

9 AI Debugging Techniques That Help Catch Problems Faster

How to Add SMS to Marketo Smart Campaigns Without Breaking Your Workflow

Best MCP Server for SEO in 2026: Guide for GEO, AEO, and SERM Experts

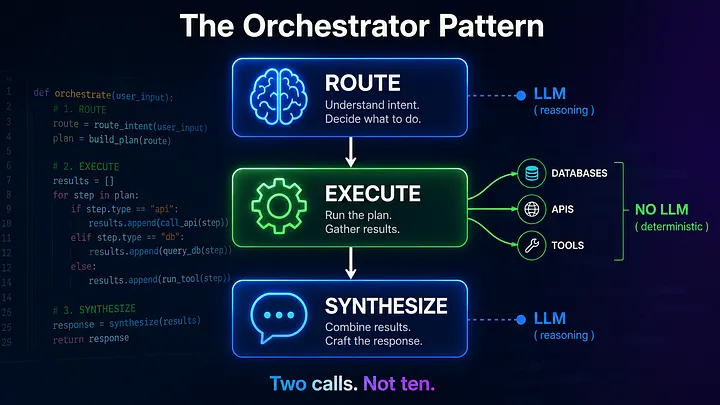

Beyond the Agentic Loop: The Orchestrator Pattern for Multi-Agent Systems