Generative AI tools have exploded into the workplace, promising to supercharge productivity. ChatGPT famously reached 100 million users faster than any app before it, and companies have poured billions into AI to turbocharge knowledge work. Need a report drafted or some code written? Just ask the algorithm. Proponents tout head-spinning gains: one MIT study found that tools like ChatGPT help employees write everything from emails to blog posts 40% faster. In technical fields, generative coding assistants such as GitHub Copilot have cut programming time by over 50% in some studies (hbr.org). Consulting firms like McKinsey see generative AI as the next productivity frontier, estimating it could add $2.6 to $4.4 trillion in value per year across industries. The potential is so vast that these models might automate tasks taking up 60–70% of workers’ time today, especially in roles heavy on text and data crunching (mckinsey.com). No wonder knowledge workers are eagerly inviting their new AI “coworkers” into daily tasks.

The New Office Sidekick (That Never Sleeps)

For many professionals, generative AI has become a tireless assistant for the busywork of modern jobs. Writing and research: Instead of starting with a blank page, employees use ChatGPT to draft emails, marketing copy, or even first passes at project proposals. The AI can summarize dense research reports or extract key points from meeting transcripts in seconds. Presentations: Stuck on slide content? Knowledge workers are asking tools like ChatGPT to brainstorm catchy headlines or outline decks, and turning to image generators (hello, DALL·E) to whip up on-brand graphics for that quarterly report. Brainstorming: Need ideas for a product name or a fresh approach to a client problem? Generative AI can spit out dozens of suggestions at 3 AM without batting an eye. Indeed, humans teaming up with gen AI often produce higher-quality results in less time, whether it’s drafting a performance review or crafting a clever slide tagline. Early research confirms the upside: In an experiment at a consulting firm, consultants given GPT-4 assistance completed a proposal task dramatically faster and better, with nearly a 40% performance boost compared to those working solo (mitsloan.mit.edu). “It’s like having an intern who’s read the entire internet,” gushes the excited knowledge worker. Who wouldn’t want an extra pair of (digital) hands to handle the drudge work?

But as any manager will tell you, speedy output isn’t everything. Results matter — and this is where the AI story gets complicated.

Beware the Rise of “Workslop”

Imagine this: you’re in a high-stakes meeting, flipping through a colleague’s slick presentation. The slides look professional — bullet points crisp, language polished. But as you dig in, something feels off. The data seems vaguely familiar, the insights generic, and that key statistic? Utterly fabricated. Welcome to the era of “workslop” — low-quality, AI-generated output masquerading as real work. The term, coined to describe convincingly mediocre content churned out by AI, highlights a growing problem: these tools can produce polished nonsense that erodes trust and creates extra work. In other words, generative AI can confidently pump out B+ material that on closer inspection is more fluff than substance.

Researchers are finding this “fake productivity” all over. A recent survey of over 1,000 employees (by researchers at BetterUp Labs and Stanford’s Social Media Lab) reported that 40% had to deal with AI-generated work that looked good but was basically empty, forcing them to spend time cleaning up the mess. All those auto-generated reports and summaries don’t actually save time if a coworker must decipher and redo them. In fact, workers said they burn nearly two hours vetting and fixing each instance of such workslop. Add it up, and that “busywork tax” costs an estimated $9 million a year in lost productivity for a 10,000-person company (techcrunch.com). So much for the great efficiency boost: it’s hard to claim AI is making us more productive when teams are spending afternoons double-checking an algorithm’s half-baked deliverables.
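To see how a figure of that size can arise, here is a minimal back-of-the-envelope sketch. The headcount, the 40% share, and the roughly two hours per instance come from the paragraph above. The incident frequency and the hourly cost are placeholder assumptions chosen purely for illustration, not numbers from the study, and the function name `annual_workslop_cost` is hypothetical.

```python
def annual_workslop_cost(headcount, affected_share, hours_per_incident,
                         incidents_per_month, hourly_cost):
    """Rough yearly cost of the time spent cleaning up low-quality AI output."""
    affected_workers = headcount * affected_share
    incidents_per_year = affected_workers * incidents_per_month * 12
    hours_lost = incidents_per_year * hours_per_incident
    return hours_lost * hourly_cost


estimate = annual_workslop_cost(
    headcount=10_000,        # company size used in the article
    affected_share=0.40,     # share of employees dealing with workslop (from the article)
    hours_per_incident=2.0,  # "nearly two hours" per instance, rounded up
    incidents_per_month=1,   # ASSUMPTION: frequency is not stated in the article
    hourly_cost=95.0,        # ASSUMPTION: hypothetical fully loaded hourly cost, in dollars
)
print(f"Estimated annual cost: ${estimate:,.0f}")
```

With these placeholder inputs the total comes to roughly $9.1 million, the same order of magnitude as the cited estimate. The point is the structure of the calculation: the cost scales linearly with every input, so halving the cleanup time or the share of affected workers halves the bill.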

It’s not just anecdotal grumbling; big-picture analyses are sounding alarms too. Despite the hype, most companies aren’t seeing the payoff from generative AI yet. One survey found 80% of firms using gen AI reported no significant bottom-line impact, and nearly half have already abandoned their AI initiatives as disappointments. An MIT study famously concluded that 95% of corporate AI pilots failed to show any tangible ROI (theguardian.com). With stats like that, it’s no surprise that analysts have begun muttering about a coming reality check. (Gartner even placed generative AI on the cusp of the “trough of disillusionment,” as early enthusiasm gives way to hard questions about implementation and return on investment; see aimagazine.com.)

In plain English: we’ve deployed a lot of flashy AI demos, but where’s the beef?

The disconnect often boils down to output vs outcome. Generative AI makes it absurdly easy to create content — emails, documents, code, you name it. Volume goes up; but does value? Skeptics point out that piling on more mediocre content can actually slow down an organization, as people sift through bloated inboxes and redundant reports. As one commentator quipped, AI sometimes seems to “accelerate the production of BS” — delivering results that look like work but don’t move work forward. If everyone is turning in AI-assisted papers, the bar for real insight and originality only rises.

Hyper-Efficient or Hyper-Shallow?

There’s also a more human cost lurking beneath the productivity stats. Sure, generative AI can crank out a decent first draft or answer, but what does that do to us, the workers? Early research suggests a double-edged sword. In one study, employees who collaborated with AI on a task did perform better and faster, but they ended up less motivated and more bored afterward (hbr.org). By leaning on the AI, they got the job done, yet felt less ownership and engagement. It’s as if offloading too much to the machine took some of the meaning (or at least the mental stimulation) out of the work. Over time, this raises a worrying question: Are we saving time, only to create an army of disengaged button-pushers?

Workplace experts are already warning of a “shallow work” trap. Nicole Helmer, a VP at a learning platform, observes that if employees start outsourcing all their thinking to AI, they risk becoming “hyper-efficient but intellectually passive.” The AI handles the problem-solving, while humans mindlessly approve the output, which is not exactly a recipe for innovation or growth. Critical thinking could atrophy like an unused muscle. In a cheeky piece titled “Are Our Brains Rotting?”, one futurist outlet noted the concern that over-reliance on AI might dull our capacity for deep reasoning (degreed.com). After all, if ChatGPT is always there to write the memo or suggest ideas, how often do we flex our own creative muscles? Some leaders even suggest this could lead to a “commodity” mindset for thinking skills: if everyone has access to the same AI-generated answers, genuine originality and expertise become all the more precious (and perhaps rare).

Interestingly, the productivity boost from AI tends to be bigger for less experienced workers than for experts. Novices get a huge leg-up from readily available knowledge and templated outputs. But seasoned pros sometimes find the AI more of a blocker on complex problems, or worse, a tempting crutch to avoid the hard work of truly understanding the issue. Researchers observed that when a task was beyond the AI’s depth, many people would “switch off their brains and follow what AI recommends,” even when it was wrong. The result: a slick-looking answer that’s completely off-base. (In one experiment, participants using GPT confidently produced a flawed recommendation, but with a very well-written justification for it (mitsloan.mit.edu). The content sounded great, but it was fundamentally incorrect.) This highlights a subtle danger: generative AI is very good at sounding competent. It can lull us into a false sense of security, encouraging a kind of intellectual laziness where we trust the polished output instead of double-checking facts or thinking critically. In the long run, that’s a recipe for poorer skills and more mistakes, hiding under a veneer of algorithmic eloquence.

Productivity Paradox: Finding the Right Balance

So, are tools like ChatGPT and DALL·E truly enhancing productivity for knowledge workers, or just creating the appearance of it? The truth — in classic knowledge-worker fashion — is nuanced. Yes, generative AI absolutely can save time and boost output. It’s fantastic for first drafts, quick research, boilerplate emails, and sparking ideas. It can translate a rough outline into a polished memo in minutes, or generate 10 variations of a tagline when your brain is fried at 6 p.m. These are real efficiency gains that many workers (often under deadline pressure) gratefully embrace. In fact, a kind of shadow productivity revolution is happening: employees often adopt AI tools on their own, under management’s radar, precisely because they do feel it helps them get work done faster. The “grassroots” uptake of ChatGPT at work — even when the bosses haven’t officially approved it — suggests that individuals are finding genuine value in it for their day-to-day tasks.

However, productivity isn’t just about speed; it’s also about outcomes and quality. And here is where generative AI can mislead. It’s all too easy to churn out pages of content or lines of code and declare victory, without asking: did this actually achieve what we needed? As the Guardian dryly noted, plenty of firms have pressed the AI turbo button only to find no real impact on the bottom line (theguardian.com). If an AI-written report requires an hour of human editing to fix inaccuracies, have you really gained anything? If every employee is suddenly flooding Slack with AI-generated status updates, is the team more informed, or just more inundated? The tension between perceived efficiency and actual impact is the crux of the matter. Generative AI can make us feel productive, cranking out deliverables at all hours, yet those outputs might be superficial or off-target, creating as many follow-up tasks as they finish.

The path forward, according to thought leaders, is to use AI deliberately, not indiscriminately. That means treating generative AI as a collaborator or creative aide, not an infallible oracle or a replacement for human judgment. Organizations seeing success tend to set clear guidelines and invest in training: they encourage employees to leverage AI for what it does best (speed and breadth) while insisting on human oversight for what humans do best (context, nuance, and ethical and critical thinking). In practical terms, that might mean using ChatGPT to generate a list of ideas, then having a team vet and refine those ideas. Or letting an AI draft an analysis outline, but requiring a person to fill in the insight and double-check facts. Some companies are even analyzing the “shadow AI” usage patterns among their staff to see where these tools truly add value, then officially adopting those use cases.

Far from replacing knowledge workers, the most effective use of AI augments them. Think of it as a power tool: it can accelerate work, but you still need skill to aim it correctly. As one Harvard study put it, the best results come when humans apply cognitive effort and judgment alongside AI, rather than blindly accepting the AI’s output (mitsloan.mit.edu). In other words, keep your brain in the loop. Generative AI can handle the grunt work of generating options or boilerplate text, freeing you to do the higher-order thinking and decision-making. When used this way, it does tend to meaningfully improve quality and save time. The key is not letting the glitter of effortless output blind you to its limitations.

At the end of the day, generative AI is a tool — a very powerful one, but still just a tool. Like any tool, its impact depends on how we wield it. It can amplify productivity or amplify pretend productivity. It can be a shortcut to valuable results, or a shortcut to looking busy. The early evidence from the field is mixed for a reason: some have figured out how to turn AI into a true ally at work, while others are drowning in a flood of AI-generated spam. The challenge now for knowledge workers (and their bosses) is to close that gap. With a bit of strategy, a dash of skepticism, and yes, some old-fashioned human creativity, we can ensure that our robot assistants actually make us more effective — and not just efficient at creating noise. In the end, the promise of generative AI is real, but so is the peril of performative productivity. Striking the balance will determine whether these tools become a genuine engine of knowledge work — or just the latest shiny object that kept us busy without making a difference. The choice, it seems, is ours.

POSTS ACROSS THE NETWORK

Best AI Blogs for Developers in 2026 (What’s Actually Worth Reading)

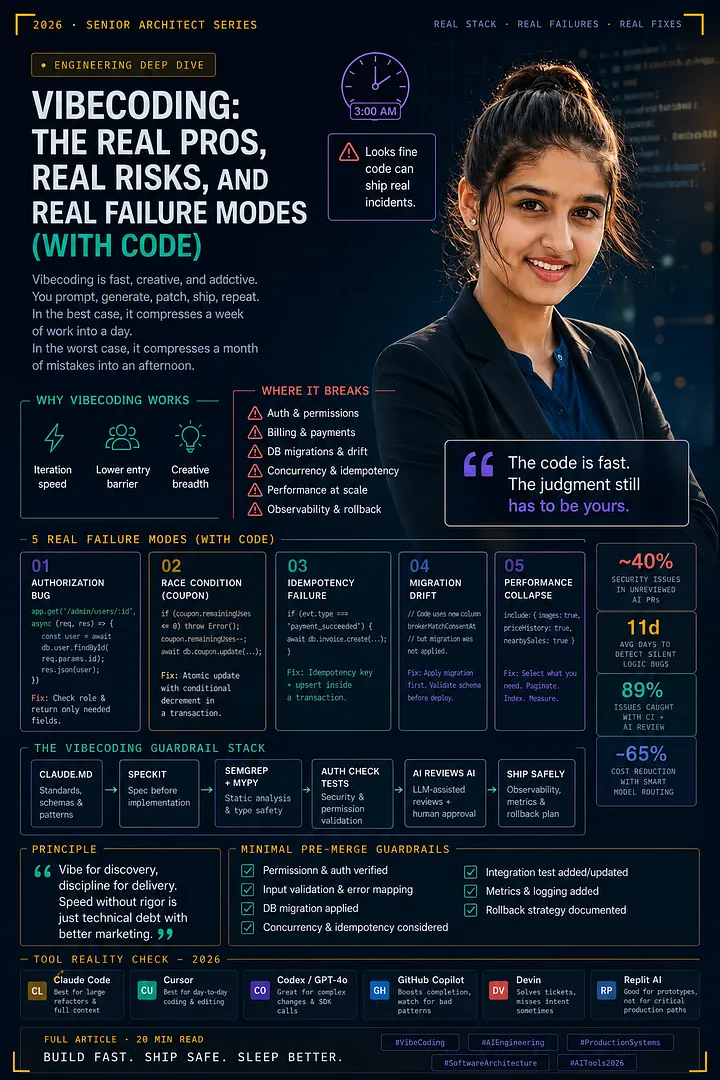

Vibecoding: The Real Pros, Real Risks, and Real Failure Modes (With Code)