The Hidden Buying Committee in AI Infrastructure Deals

Many AI infrastructure founders assume their buyer is simple.

If the product helps developers, the buyer must be developers.

If the product improves model performance, the buyer must be the AI team.

If the product optimizes GPUs, the buyer must be engineering leadership.

On the surface, none of this is wrong.

But in large enterprises, the people who use a product are rarely the only ones deciding whether it gets adopted.

And this is where many AI startups get stuck.

Because the real buying committee for AI infrastructure is often much larger than founders expect.

[Diagram: the AI tool at the center, surrounded by Security, Finance, DevOps, and Developers]

The Default Assumption: “Developers Will Drive Adoption”

Most AI infrastructure products are designed for developers.

Examples include tools for:

- prompt management

- model evaluation

- LLM observability

- vector database optimization

- inference pipelines

- GPU orchestration

So the natural assumption is:

“If developers love it, adoption will happen.”

In early experiments or startup environments, this sometimes works.

But inside large companies, developer enthusiasm alone rarely unlocks infrastructure purchases.

Why?

Because AI systems create risks that extend beyond engineering teams.

The Hidden Stakeholders

When an enterprise deploys AI systems at scale, multiple teams become involved.

Not just the engineers building the models.

A typical AI infrastructure decision might quietly involve:

Engineering teams: They evaluate technical fit, performance, and integration effort.

Platform or DevOps teams: They worry about reliability, observability, and operational overhead.

Security teams: They evaluate how models access and interact with internal data.

Legal and compliance teams: They review potential regulatory exposure and data handling practices.

Finance or infrastructure leaders: They care about compute spending, GPU usage, and long-term cost structure.

Each group sees the AI system from a completely different angle.

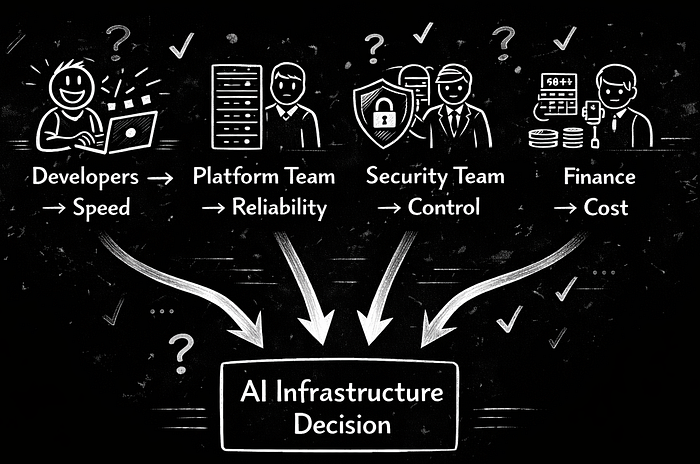

Developers → Speed

Platform Team → Reliability

Security Team → Control

Finance → Cost

All four concerns converge on a single AI infrastructure decision.

Which means the product is not just solving a developer problem.

It is entering a multi-team operational system.

Why This Creates Positioning Problems

Many AI startups position their product around developer productivity.

Typical messaging sounds like:

“We help developers build AI applications faster.”

or

“We simplify building with LLMs.”

That message resonates with engineers.

But it often fails to answer questions from other stakeholders, such as:

- Does this increase infrastructure cost?

- Does this introduce new security risks?

- How reliable will these systems be in production?

- Who will maintain this long term?

When those questions remain unclear, the deal slows down.

Not because the product is weak.

But because the buying committee is larger than the narrative.

A Real-World Example: GPU Infrastructure

Consider companies that provide GPU infrastructure for AI workloads.

One well-known example is CoreWeave.

At first glance, the product seems simple:

on-demand GPU infrastructure optimized for AI workloads.

Engineers care about performance and availability.

But in practice, the decision to use a provider like CoreWeave often involves several stakeholders:

Engineering teams want faster model training.

Infrastructure teams want stable, scalable compute resources.

Finance teams want to understand how GPU usage affects overall cloud spending.

Leadership wants confidence that the company won’t be locked into an unsustainable cost structure.

The purchase is not driven by a single group.

It is shaped by a combination of technical and economic concerns.

That’s why the narrative around AI infrastructure often includes themes like:

- compute efficiency

- reliability at scale

- infrastructure flexibility

Not just developer convenience.

The Pattern Across AI Infrastructure

This dynamic appears repeatedly across the AI ecosystem.

Tools that start as developer utilities often expand into broader operational systems.

Examples include companies like:

- Weights & Biases, which helps teams track and manage machine learning experiments

- Databricks, which provides data infrastructure for large-scale analytics and AI workloads

Both companies began with products aimed at technical users.

But their long-term growth depended on appealing to multiple stakeholders across the organization.

Because once AI systems reach production, they stop being purely engineering problems.

They become business infrastructure.

What This Means for Founders

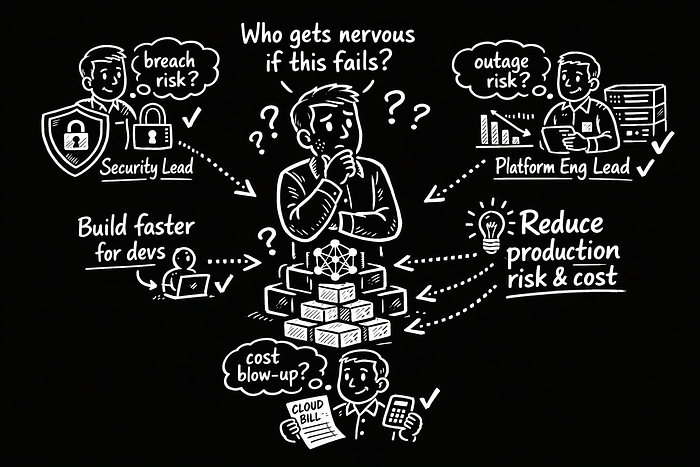

If you’re building an AI infrastructure startup, one question matters more than most founders expect:

Who becomes nervous if this system fails?

That group often has influence in the buying process.

Sometimes it’s:

- the security team

- the platform engineering team

- the finance team monitoring compute spend

When founders understand this broader set of stakeholders, their positioning changes.

Instead of saying:

“We help developers build AI faster.”

The narrative might become:

“We reduce the operational risk of running AI systems in production.”

Or:

“We control the infrastructure costs of large-scale AI workloads.”

Same product.

Different lens.

But one speaks to a wider group of decision makers.

The Real Insight

AI infrastructure deals are rarely won by convincing just one team.

They are won by aligning the concerns of several groups that each see the system differently.

Developers want speed.

Platform teams want reliability.

Security teams want control.

Finance wants predictable costs.

The strongest infrastructure companies understand this dynamic early.

And they position their product in a way that addresses the entire system around AI, not just the developers building it.

Because once AI becomes production infrastructure, the buying committee quietly grows.

And founders who recognize that shift are much better prepared to sell into real enterprises.

For founders building AI infrastructure, the challenge isn't just building a great product. It's positioning that product so every stakeholder in the system understands why it matters.
