The Quiet Shift From Model Safety to Execution Safety: What IMDA’s Agentic AI Framework Signals for Blockchain and Enterprise AI

For the last few years, the AI industry has been obsessed with models.

Bigger models. Safer models. Better prompts. Alignment debates have dominated conferences, research papers, and product roadmaps. Entire ecosystems have been built around training data, guardrails, and orchestration layers designed to keep intelligent systems predictable.

Researchers debated hallucinations. Ethicists debated bias. Engineers debated context windows. The whole industry stared at the model as if it were the only layer that mattered.

But something important has changed.

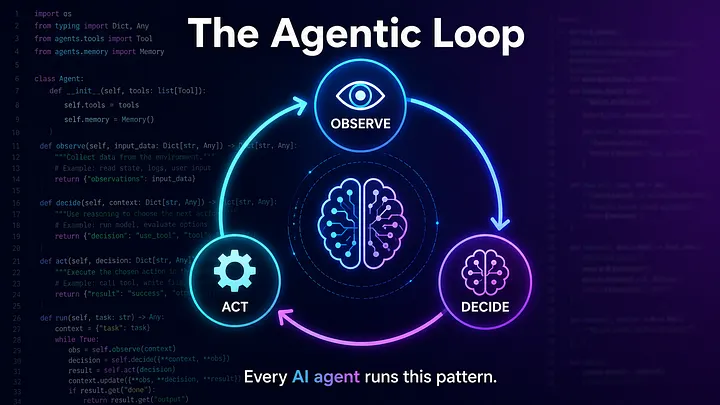

AI is no longer just generating text, images, or code. It is beginning to act.

Agents are booking meetings, executing workflows, triggering API calls, managing infrastructure, and increasingly interacting with financial systems. The conversation is quietly shifting away from “what does the model say?” toward “what does the system do?” That is a fundamentally different question, with fundamentally different stakes.

Why Singapore’s Framework Matters More Than Most Realize

Singapore’s Infocomm Media Development Authority (IMDA) recently released its Model AI Governance Framework for Agentic AI. At first glance, it reads like another policy document. But beneath the governance language is something more significant.

The framework doesn’t focus primarily on training data or alignment philosophy. Instead, it addresses what happens when AI agents begin operating in the real world. It points toward identity, permissions, traceability, and technical controls as core requirements for agentic systems.

This is not just compliance thinking. It is architectural signaling.

The IMDA framework suggests that the industry is moving from model safety toward execution safety. The difference may sound subtle, but it changes how systems need to be designed.

The Problem Most Safety Discussions Still Miss

Much of today’s AI safety conversation lives at the reasoning layer. Developers refine prompts. Teams build moderation pipelines. Red teams probe model behavior. These approaches matter, but they assume that risk lives inside the model’s output.

Agentic systems break that assumption.

Once an AI can access tools, call external services, initiate transactions, or modify infrastructure, risk moves into the execution layer. A perfectly aligned model can still send funds to the wrong destination if execution boundaries are unclear. A carefully designed prompt cannot stop an autonomous workflow from drifting outside its intended scope when context changes mid-process.

The IMDA framework addresses this shift directly. It identifies cascading system-level risks, where one agent’s decision propagates through an entire workflow, and unpredictable outcomes that emerge from dynamic interactions between agents and external tools.

These are not hypothetical concerns. They are system design realities.
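One way to picture containment of cascading risk is a minimal Python sketch. It assumes a single workflow in which several agents draw on a shared budget. The class name `WorkflowCircuitBreaker` and its limits are hypothetical and do not come from the IMDA framework. Each agent can still act within its own permissions, but the workflow as a whole has a ceiling. The first breach halts every agent, so an upstream error stops propagating downstream.

```python
import threading


class WorkflowCircuitBreaker:
    """Hypothetical shared limit across every agent in one workflow.

    Each agent may act within its own permissions, but the combined side
    effects of the workflow are capped. The first breach trips the breaker
    and blocks every agent, so an upstream error stops propagating.
    """

    def __init__(self, max_total: float, max_actions: int):
        self.max_total = max_total
        self.max_actions = max_actions
        self.total = 0.0
        self.ledger = []               # (agent_id, amount) for each approved action
        self.tripped = False
        self._lock = threading.Lock()  # agents may call this concurrently

    def try_spend(self, agent_id: str, amount: float) -> bool:
        """Approve the action only if the workflow stays within both limits."""
        with self._lock:
            if self.tripped:
                return False
            if (self.total + amount > self.max_total
                    or len(self.ledger) + 1 > self.max_actions):
                self.tripped = True  # halt the whole workflow, not just this agent
                return False
            self.total += amount
            self.ledger.append((agent_id, amount))
            return True


breaker = WorkflowCircuitBreaker(max_total=1000, max_actions=10)
print(breaker.try_spend("pricing-agent", 400))   # True
print(breaker.try_spend("payments-agent", 500))  # True
print(breaker.try_spend("payments-agent", 300))  # False: would exceed 1000
print(breaker.try_spend("refund-agent", 10))     # False: workflow already halted
```

Tripping on the first breach is deliberately conservative. A softer variant could reject only the offending action and alert a human, at the cost of letting a possibly faulty workflow keep running.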

What the Framework Is Really Signaling

The document organizes governance around four pillars, but the deeper message lies in how those pillars reshape architecture.

First, it emphasizes defining an agent’s action-space at the design stage. Not every agent should have the same capabilities. Systems must distinguish between agents that read data and those that can modify or execute actions.

Second, it acknowledges that human oversight changes when automation scales. Continuous human review becomes impractical, so systems must introduce meaningful checkpoints where irreversible actions require verification.

Third, it highlights the need for technical controls across the entire lifecycle of an agent. Monitoring, identity binding, scoped permissions, and execution checkpoints are not optional features. They are core infrastructure.

Finally, it distributes responsibility across builders, enterprises, and end users. Governance becomes a shared system property rather than a top-down policy.

Read carefully, this framework sounds less like a compliance checklist and more like a blueprint for how enterprise AI systems will need to operate.
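The first two pillars, a bounded action space and checkpoints on irreversible actions, translate fairly directly into code. The sketch below is one illustrative way to do it in Python. It is not something the framework prescribes. Each agent's action space is declared up front as a mapping from action names to capability tiers, undeclared actions are denied, and actions marked irreversible pause for human approval instead of executing. `Capability`, `Decision`, `AgentPolicy`, and `authorize` are hypothetical names.

```python
from dataclasses import dataclass, field
from enum import Enum


class Capability(Enum):
    """Hypothetical capability tiers for actions in an agent's action space."""
    READ = 1          # query data, no side effects
    WRITE = 2         # modify state in a way that can be rolled back
    IRREVERSIBLE = 3  # e.g. send funds, delete infrastructure


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN = "needs_human_approval"
    DENY = "deny"


@dataclass
class AgentPolicy:
    agent_id: str
    # Declared at design time: action name -> capability tier.
    allowed_actions: dict = field(default_factory=dict)


def authorize(policy: AgentPolicy, action: str, human_approved: bool = False) -> Decision:
    """Gate one proposed action against the agent's declared action space."""
    tier = policy.allowed_actions.get(action)
    if tier is None:
        # Anything not declared up front is outside the action space.
        return Decision.DENY
    if tier is Capability.IRREVERSIBLE and not human_approved:
        # Checkpoint: irreversible actions wait for human verification.
        return Decision.NEEDS_HUMAN
    return Decision.ALLOW


reader = AgentPolicy("report-bot", {"read_ledger": Capability.READ})
payer = AgentPolicy("payments-bot", {
    "read_ledger": Capability.READ,
    "send_payment": Capability.IRREVERSIBLE,
})

print(authorize(reader, "send_payment").value)                      # deny
print(authorize(payer, "send_payment").value)                       # needs_human_approval
print(authorize(payer, "send_payment", human_approved=True).value)  # allow
```

Keeping the action map static and declared at design time, rather than inferred at runtime, is what lets permissions be reviewed before an agent ever runs.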

The Blockchain Angle Most People Are Missing

Although the framework does not focus on blockchain technology, its implications align directly with trends emerging in digital asset infrastructure.

For years, blockchain development has emphasized performance: faster settlement, higher throughput, and tokenized assets moving across programmable rails. But speed alone does not create trust. In fact, faster rails often increase execution risk, because a mistaken or unauthorized action can settle before anyone has a chance to intervene.

Programmable financial systems assume that actions executed on-chain represent valid intent. Smart contracts settle transactions automatically. DeFi protocols operate continuously. These properties become more complex when autonomous agents enter the picture.

A private key proves control. It does not prove intent. It does not confirm that an autonomous action stayed within its authorized scope or that the underlying decision context remained valid.

The IMDA framework quietly points toward a future where governance moves closer to the execution layer itself. The next phase of infrastructure will likely introduce verification primitives that sit between agent reasoning and irreversible action, ensuring that autonomy remains bounded even as systems scale.

For builders working on tokenization, settlement networks, or AI-driven finance, this shift changes the architectural conversation. Performance becomes only one dimension of trust. Execution integrity becomes another.
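The gap between control and intent can be shown in a few lines. The sketch below assumes a hypothetical authorization envelope that binds an agent's proposed action to a scope, an expiry, and a hash of the decision context the agent reasoned over. It uses an HMAC shared secret only to keep the example self-contained; a production design would more likely use asymmetric signatures and managed keys. It is not the A2SPA protocol or any published standard, and `sign_intent`, `verify_intent`, and `context_hash` are illustrative names. The key detail is that `verify_intent` checks the signature first and then keeps going: a correctly signed request can still be rejected for exceeding its limit or for relying on a context that has since changed.

```python
import hashlib
import hmac
import json
import time


def _canonical(obj: dict) -> bytes:
    # Deterministic serialization so signer and verifier hash identical bytes.
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode()


def context_hash(context: dict) -> str:
    """Fingerprint of the decision context the agent reasoned over."""
    return hashlib.sha256(_canonical(context)).hexdigest()


def sign_intent(key: bytes, payload: dict) -> str:
    # HMAC stands in for a real signature scheme to keep the sketch stdlib-only.
    return hmac.new(key, _canonical(payload), hashlib.sha256).hexdigest()


def verify_intent(key, payload, signature, scope, current_context, now=None):
    """Return (ok, reason). A valid signature is necessary but not sufficient."""
    now = time.time() if now is None else now
    if not hmac.compare_digest(sign_intent(key, payload), signature):
        return False, "bad signature"
    if now > payload["expires_at"]:
        return False, "authorization expired"
    # Scope is held by the verifier, not chosen by whoever holds the key.
    if payload["action"] not in scope["actions"]:
        return False, "action outside scope"
    if payload["destination"] not in scope["destinations"]:
        return False, "destination not allowlisted"
    if payload["amount"] > scope["max_amount"]:
        return False, "amount exceeds limit"
    # Reject if the facts the decision relied on have changed since signing.
    if payload["context_hash"] != context_hash(current_context):
        return False, "decision context changed"
    return True, "ok"


key = b"demo-secret"  # hypothetical; real keys belong in a key manager
scope = {"actions": {"transfer"}, "destinations": {"acct-123"}, "max_amount": 500}
ctx = {"invoice": "INV-42", "fx_rate": "1.35"}

intent = {
    "agent_id": "payments-bot",
    "action": "transfer",
    "destination": "acct-123",
    "amount": 250,
    "context_hash": context_hash(ctx),
    "expires_at": time.time() + 60,
}
sig = sign_intent(key, intent)
print(verify_intent(key, intent, sig, scope, ctx))  # (True, 'ok')

# Same key, correctly signed, but the context shifted after the decision:
print(verify_intent(key, intent, sig, scope, {**ctx, "fx_rate": "1.50"}))
# (False, 'decision context changed')

# Whoever holds the key can sign anything; scope still blocks it:
big = {**intent, "amount": 900}
print(verify_intent(key, big, sign_intent(key, big), scope, ctx))
# (False, 'amount exceeds limit')
```

The sketch assumes the verifier can reconstruct the same context the agent saw; deciding which facts belong in that context is the hard design question in practice.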

The Real Engineering Challenge

For enterprise development teams, the implications are immediate.

Traditional wallet architectures and API integrations were designed for human operators. A user initiates an action, reviews the result, and remains accountable. Agentic systems introduce parallel autonomous workflows, extended execution timelines, and emergent risks that arise from interactions between agents.

Developers now face new questions. How should permissions be scoped when multiple agents operate simultaneously? How can teams reconstruct an agent’s decision path weeks after execution? What happens when an agent acts within allowed parameters but produces unintended outcomes because external conditions shifted?

Emerging protocols such as the Model Context Protocol (MCP) and Agent2Agent (A2A) are evolving quickly, but testing methodologies and audit structures for autonomous execution are still maturing. The framework does not prescribe technical implementations, yet it makes clear that execution-layer governance is no longer optional for enterprise deployments.
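Reconstructing an agent's decision path weeks later requires a record that cannot be quietly edited. One common pattern, sketched below under the assumption that a single process writes the log, is a hash chain: each entry stores the hash of the previous one, so changing any past record means recomputing every hash after it. Publishing the latest hash somewhere the writer cannot rewrite (a blockchain is one option) makes such a rewrite, or a truncated tail, detectable. The `AuditLog` class and its step names are hypothetical, and a real deployment would also need durable storage.

```python
import copy
import hashlib
import json
import time


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Hypothetical append-only log where each entry commits to the previous one."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, agent_id: str, step: str, detail: dict) -> str:
        body = {
            "agent_id": agent_id,
            "step": step,                     # e.g. "observe", "decide", "execute"
            "detail": copy.deepcopy(detail),  # snapshot, immune to later caller edits
            "ts": time.time(),
            "prev": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute the chain. Editing or reordering entries breaks it, as does
        deleting any entry but the last; catching tail truncation needs the
        latest hash stored elsewhere."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

    def trace(self, agent_id: str) -> list:
        """Replay one agent's decision path in the order it happened."""
        return [(e["step"], e["detail"]) for e in self.entries if e["agent_id"] == agent_id]


log = AuditLog()
log.append("payments-bot", "observe", {"invoice": "INV-42", "due": 250})
log.append("payments-bot", "decide", {"pay": 250, "reason": "invoice due"})
log.append("payments-bot", "execute", {"tx": "transfer", "amount": 250})

print(log.verify())                               # True
print([s for s, _ in log.trace("payments-bot")])  # ['observe', 'decide', 'execute']

log.entries[1]["detail"]["pay"] = 2500  # tamper with history
print(log.verify())                     # False
```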

Where Infrastructure Is Heading

The broader takeaway is not that regulation is tightening. It is that architecture is evolving to match capability.

AI systems are moving from tools that assist humans toward systems that act with delegated authority. Governance is becoming programmable. Execution is becoming accountable. Infrastructure is being shaped around the assumption that autonomous agents will soon participate directly in financial and operational workflows.

Paradoxically, this shift may accelerate adoption rather than slow it down. When enterprises can verify that autonomous actions are bounded, traceable, and controllable at runtime, confidence increases. The risk surface becomes visible instead of opaque. Institutions become more willing to deploy AI into high-value environments.

The Signal in the Noise

The IMDA Model AI Governance Framework for Agentic AI will be cited in policy discussions for years, but its deeper value lies in what it signals to builders.

The next moat in AI will not be defined solely by model intelligence. It will be defined by execution integrity.

The teams that learn how to verify intent at the moment of action, maintain auditable histories of autonomous decisions, and design systems where autonomy and accountability grow together will shape the next generation of enterprise infrastructure.

The quiet shift is already underway. The real question is whether you are building for the architecture that is fading, or the one that is just beginning.

The IMDA Model AI Governance Framework for Agentic AI is publicly available at:

If you are building at the intersection of AI, blockchain, and enterprise infrastructure, the primary source is worth reading in full.

About the Author

Jonathan Capriola is the founder of AI Blockchain Ventures and the inventor of A2SPA (Agent to Secure Payload Authorization), an execution-layer protocol focused on verifying autonomous AI actions at runtime. His work explores how agentic systems, blockchain infrastructure, and enterprise AI development converge as automation moves from reasoning into real-world execution.

Much of his writing focuses on the architectural shift toward execution integrity, delegated authority, and verifiable autonomy in financial and enterprise environments.

Learn more about A2SPA and execution-layer security here:

POSTS ACROSS THE NETWORK

The Compounding Problem in Agentic AI Era

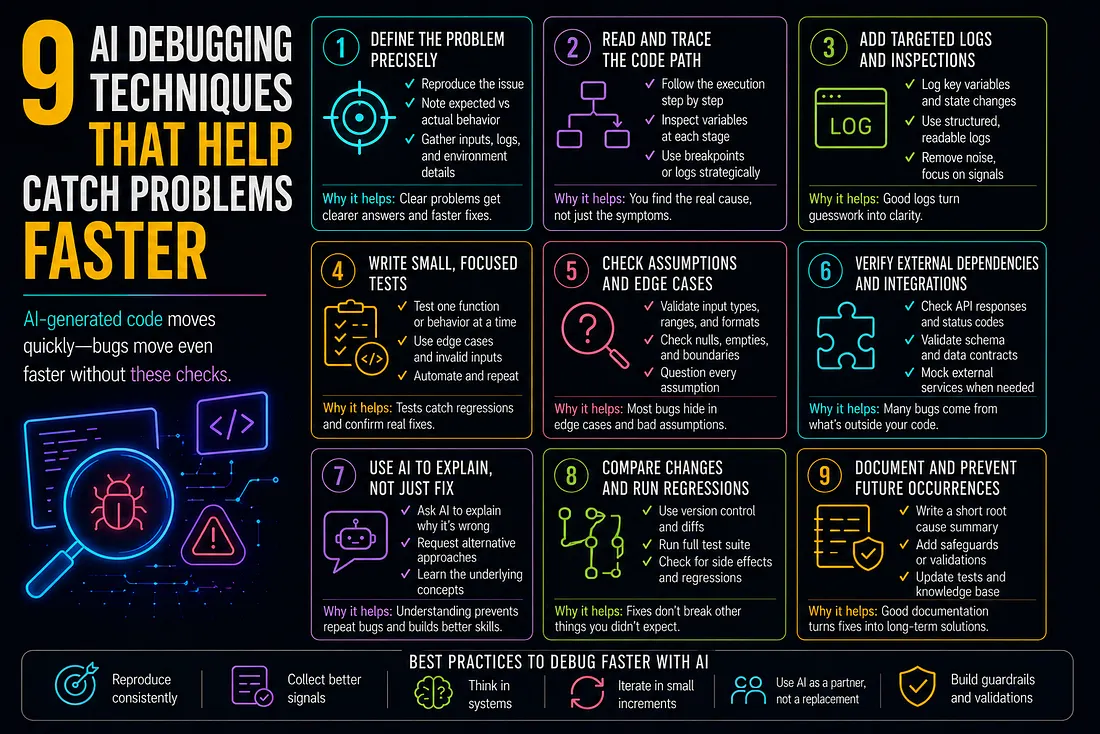

9 AI Debugging Techniques That Help Catch Problems Faster

How to Add SMS to Marketo Smart Campaigns Without Breaking Your Workflow

Best MCP Server for SEO in 2026: Guide for GEO, AEO, and SERM Experts

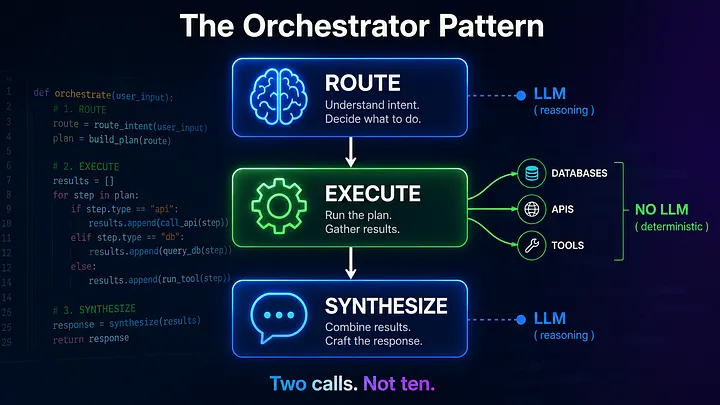

Beyond the Agentic Loop: The Orchestrator Pattern for Multi-Agent Systems