Artificial intelligence crossed a critical boundary:

it moved from generating information

to causing consequences.

That transition changes everything.

Probabilistic systems now hold real operational authority over financial transactions, infrastructure, healthcare workflows, industrial controls, and autonomous commerce.

The industry invested heavily in model intelligence, reasoning quality, orchestration, and observability.

Almost nobody focused on the most important boundary in autonomous systems: the execution layer.

The Core Problem: Probabilistic Intelligence Meets Deterministic Reality

Large language models are probabilistic systems: they produce outputs by sampling from learned probability distributions. Outputs vary. Reasoning varies. Execution paths vary. Emergent behaviors occur.

But the systems they act on are deterministic — payment rails, APIs, databases, cloud infrastructure, authentication systems, industrial controls.

This creates a fundamental mismatch: a probabilistic system triggering deterministic consequences.

That mismatch is the core reason the execution layer must exist.
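To make the mismatch concrete, here is a minimal Python sketch. The action names and scores in `LOGITS` are hypothetical, and real models sample tokens rather than whole actions. The point is only that temperature sampling can return different proposals for the identical input, while a deterministic downstream function (the hypothetical `apply_refund`) returns the same result every time.

```python
import math
import random

# Hypothetical scores a model might assign to candidate next actions for ONE prompt.
# Illustrative numbers only; real models sample tokens, not whole actions.
LOGITS = {"refund_order": 2.0, "escalate_ticket": 1.5, "close_ticket": 0.5}


def sample_action(logits, temperature=1.0, rng=random):
    """Temperature sampling: softmax over the scores, then one weighted random draw."""
    scaled = {a: s / temperature for a, s in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    weights = {a: math.exp(s - peak) for a, s in scaled.items()}
    total = sum(weights.values())
    actions = list(weights)
    return rng.choices(actions, weights=[weights[a] / total for a in actions], k=1)[0]


def apply_refund(balance_cents, amount_cents):
    """Hypothetical deterministic effect: the same inputs always give the same result."""
    return balance_cents - amount_cents


rng = random.Random(7)  # seeded so the run is reproducible
proposals = {sample_action(LOGITS, rng=rng) for _ in range(200)}
print(sorted(proposals))            # more than one distinct action for the same input
print(apply_refund(10_000, 2_500))  # 7500, every time
```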

The Execution Layer Defined

The AI execution layer is the deterministic enforcement boundary that verifies whether an AI-generated action is authorized before execution occurs.

It sits between AI reasoning and real-world consequence.

It is not model alignment. It is not observability. It is not governance dashboards or post-execution logging.

It is a runtime enforcement system with one purpose: prevent unauthorized execution from becoming a valid system state.
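A minimal sketch of that boundary, assuming a Python agent runtime. `ProposedAction`, `execute_if_authorized`, and the 500-unit transfer limit are hypothetical names and values chosen for illustration. The model only produces a `ProposedAction`; the deterministic `authorize` check runs before the tool is called, so a denied action never produces a side effect.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict


@dataclass
class ProposedAction:
    """What the model proposes. Creating one has no side effects."""
    tool: str
    params: Dict[str, Any] = field(default_factory=dict)


class ExecutionDenied(Exception):
    """Raised when the gate refuses an action; the tool is never called."""


def execute_if_authorized(
    action: ProposedAction,
    authorize: Callable[[ProposedAction], bool],
    tools: Dict[str, Callable[..., Any]],
) -> Any:
    # The deterministic decision happens before any side effect can occur.
    if action.tool not in tools or not authorize(action):
        raise ExecutionDenied(f"denied: {action.tool}")
    return tools[action.tool](**action.params)


# Hypothetical policy: only transfers of 1..500 units are authorized.
def authorize(action: ProposedAction) -> bool:
    return action.tool == "transfer" and 0 < action.params.get("amount", 0) <= 500


ledger = []


def transfer(amount):
    ledger.append(amount)
    return amount


tools = {"transfer": transfer}
print(execute_if_authorized(ProposedAction("transfer", {"amount": 200}), authorize, tools))  # 200
try:
    execute_if_authorized(ProposedAction("transfer", {"amount": 9000}), authorize, tools)
except ExecutionDenied as err:
    print(err)  # denied: transfer
print(ledger)  # [200]: the denied transfer never reached the tool
```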

Two Domains. One Boundary.

The most important principle behind the execution layer is the separation of deterministic control from probabilistic generation.

The model may remain probabilistic. Execution cannot.

This is the same architectural logic behind CPU privilege boundaries, memory protection, and cryptographic verification. The execution layer acts as a cryptographic gate between uncertainty and consequence.

Where Existing Primitives Fail

In distributed systems, trust boundaries define where assumptions stop.

TLS protected transport. OAuth protected delegated authorization. RBAC protected permissions.

But AI systems introduced a problem none of these were designed for: an authenticated agent can still generate unauthorized execution paths dynamically.

Identity is insufficient. Transport security is insufficient. Observability is insufficient.

The execution layer introduces a new trust primitive: runtime execution authorization. Not who the agent is. Not where the request came from. Whether this exact action should exist at this exact moment.
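The distinction can be sketched in a few lines of Python. `Grant`, `is_authenticated`, and `is_authorized_now` are hypothetical; the identity check stands in for whatever TLS, OAuth, or RBAC layer already exists. The same authenticated agent gets different answers depending on the action, its parameters, and the time of the request.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """Hypothetical grant: one agent, one action, a parameter bound, a time window."""
    agent_id: str
    action: str
    max_amount: int
    not_after: float  # Unix time after which the grant is void


AUTHENTICATED_AGENTS = {"billing-agent"}  # stands in for the existing identity layer


def is_authenticated(agent_id: str) -> bool:
    return agent_id in AUTHENTICATED_AGENTS


def is_authorized_now(grants, agent_id, action, amount, now):
    # Not "who is this?" but "may this exact action run at this moment?"
    return any(
        g.agent_id == agent_id
        and g.action == action
        and 0 < amount <= g.max_amount
        and now <= g.not_after
        for g in grants
    )


grants = [Grant("billing-agent", "refund", max_amount=100, not_after=1_000.0)]

# Identity succeeds in every case below; only the action-level answer changes.
print(is_authenticated("billing-agent"))                                           # True
print(is_authorized_now(grants, "billing-agent", "refund", 50, now=900.0))         # True
print(is_authorized_now(grants, "billing-agent", "refund", 5_000, now=900.0))      # False: amount out of scope
print(is_authorized_now(grants, "billing-agent", "delete_account", 0, now=900.0))  # False: never granted
print(is_authorized_now(grants, "billing-agent", "refund", 50, now=1_200.0))       # False: grant expired
```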

Runtime Verification as a Constrained State Machine

Before execution, the layer verifies payload integrity, authorization scope, parameters, destination, freshness, replay state, and permission boundaries.

This transforms execution into a constrained state machine: an action can reach the executed state only by first passing every check.

Without it: execution becomes optimistic, agents drift, policies become advisory, and every tool in the chain is trusted by assumption.

With it: invalid actions fail closed. Authority is cryptographically constrained.
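One way to read "constrained state machine" is sketched below in Python, assuming each check named above can be expressed as a function returning a boolean. The state names, the `TRANSITIONS` table, and the `run_checks` helper are hypothetical. The key property is structural: `EXECUTED` is reachable only from `VERIFIED`, so no code path can skip verification.

```python
from enum import Enum, auto


class State(Enum):
    PROPOSED = auto()
    VERIFIED = auto()
    EXECUTED = auto()
    REJECTED = auto()


# The only legal transitions; EXECUTED is reachable from VERIFIED and nowhere else.
TRANSITIONS = {
    State.PROPOSED: {State.VERIFIED, State.REJECTED},
    State.VERIFIED: {State.EXECUTED, State.REJECTED},
    State.EXECUTED: set(),
    State.REJECTED: set(),
}


class ActionRecord:
    def __init__(self):
        self.state = State.PROPOSED

    def transition(self, target: State) -> None:
        if target not in TRANSITIONS[self.state]:
            raise ValueError(f"illegal transition {self.state.name} -> {target.name}")
        self.state = target


def run_checks(record, checks):
    """Run named checks in order; the first failure moves the record to REJECTED."""
    for name, check in checks:
        if not check():
            record.transition(State.REJECTED)
            return name
    record.transition(State.VERIFIED)
    return None


def ok():
    return True


def expired():
    return False  # stands in for a real freshness check that failed


checks = [("integrity", ok), ("scope", ok), ("parameters", ok), ("destination", ok),
          ("freshness", expired), ("replay", ok), ("permissions", ok)]

record = ActionRecord()
print(run_checks(record, checks), record.state.name)  # freshness REJECTED
try:
    record.transition(State.EXECUTED)
except ValueError as err:
    print(err)  # illegal transition REJECTED -> EXECUTED
```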

Entropy Reduction in Autonomous Systems

Autonomous systems naturally increase entropy. As agents gain memory, tools, recursion, delegation, and multi-agent communication, possible execution paths grow exponentially.

The execution layer reduces that entropy by constraining the valid execution state space — the same way transaction validation works in distributed ledgers, or privilege enforcement works in kernel architecture.

That reduction is essential for safe scalability.
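A rough way to quantify this reads "entropy" loosely as log2 of the number of distinct tool sequences an agent could take, which is the entropy of a uniform choice among them. The five tools, the `ALLOWED_NEXT` policy, and the single start tool below are hypothetical.

```python
import math
from itertools import product

TOOLS = ["read_db", "write_db", "send_email", "call_agent", "pay"]
START = {"read_db"}  # hypothetical: tasks must begin by reading

# Hypothetical policy: which tool may directly follow which.
ALLOWED_NEXT = {
    "read_db": {"read_db", "write_db", "send_email"},
    "write_db": {"send_email"},
    "send_email": set(),
    "call_agent": set(),
    "pay": set(),
}


def unconstrained_paths(depth):
    return len(TOOLS) ** depth


def constrained_paths(depth):
    # Brute-force enumeration, kept for clarity rather than speed.
    return sum(
        1
        for seq in product(TOOLS, repeat=depth)
        if seq[0] in START and all(b in ALLOWED_NEXT[a] for a, b in zip(seq, seq[1:]))
    )


for d in range(1, 5):
    u, c = unconstrained_paths(d), constrained_paths(d)
    print(f"depth {d}: {u} paths ({math.log2(u):.2f} bits) vs {c} paths ({math.log2(c):.2f} bits)")
```

Under this toy policy the unconstrained space grows from 5 to 625 sequences by depth 4, while the constrained space never exceeds 4. That gap is what constraining the valid execution state space means in practice; a real policy engine would check paths incrementally rather than enumerate them.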

Fail-Closed Is Not Optional

Secure systems fail closed. If verification fails, execution does not occur.

Many current AI systems fail open through tool retries, fallback execution, permissive orchestration, and partial validation. This is dangerous at scale.

The execution layer enforces fail-closed behavior by design. No valid authorization. No execution path.

The critical property is that execution itself becomes dependent on verification success.

Verification is not advisory.

It is existential to execution.

That is the difference between logging, governance, and observability on one side and true execution enforcement on the other. Security becomes prevention, not observation.
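The contrast can be sketched in Python. `fail_open`, `fail_closed`, and `broken_verifier` are hypothetical; the verifier simulates an unreachable policy service by raising `TimeoutError`. In the fail-open wrapper an error falls through to execution; in the fail-closed wrapper anything other than an explicit `True` denies, so `execute()` is unreachable without verification success.

```python
class Denied(Exception):
    pass


def fail_open(verify, execute):
    """Anti-pattern: a verifier error is treated as permission to proceed."""
    try:
        allowed = verify()
    except Exception:
        allowed = True  # "fallback execution"
    return execute() if allowed else None


def fail_closed(verify, execute):
    """execute() is reachable only when verify() returns exactly True."""
    try:
        allowed = verify() is True
    except Exception:
        allowed = False  # errors, timeouts, malformed answers: all deny
    if not allowed:
        raise Denied("verification did not succeed; nothing executed")
    return execute()


def broken_verifier():
    raise TimeoutError("policy service unreachable")


calls = []


def execute():
    calls.append("executed")
    return "done"


print(fail_open(broken_verifier, execute))  # done: the action ran without verification
try:
    fail_closed(broken_verifier, execute)
except Denied as err:
    print(err)
print(calls)  # ['executed']: only the fail-open wrapper reached execute()
```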

Non-Repudiation and Accountability

When authorization objects are signed with asymmetric keys, the execution layer introduces cryptographic non-repudiation into AI systems. Actions become provably authorized. Execution becomes attributable. Authority chains become verifiable.

This matters for regulation, compliance, liability, and enterprise governance — especially as AI systems gain operational authority over consequential workflows.

Every execution carries verifiable provenance, and that provenance becomes the audit record.
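A minimal, standard-library sketch of the audit side, assuming decisions are appended to a hash-chained log. `AuditLog` is hypothetical. A hash chain only makes tampering evident: anyone able to rewrite the whole log can also recompute the hashes, and nothing here ties a record to a specific signer. Non-repudiation additionally requires signing each record (or the chain head) with an asymmetric key such as Ed25519, which Python's standard library does not provide and this sketch omits.

```python
import hashlib
import json


def _digest(entry, prev_hash):
    # Canonical JSON so the same entry always hashes the same way.
    body = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode()).hexdigest()


class AuditLog:
    """Append-only, hash-chained log: each record commits to everything before it."""
    GENESIS = "0" * 64

    def __init__(self):
        self.records = []

    def append(self, entry):
        prev = self.records[-1]["hash"] if self.records else self.GENESIS
        self.records.append({"entry": entry, "prev": prev, "hash": _digest(entry, prev)})

    def verify(self):
        prev = self.GENESIS
        for rec in self.records:
            if rec["prev"] != prev or rec["hash"] != _digest(rec["entry"], prev):
                return False
            prev = rec["hash"]
        return True


log = AuditLog()
log.append({"principal": "billing-agent", "action": "refund", "amount": 50, "decision": "allow"})
log.append({"principal": "billing-agent", "action": "refund", "amount": 5000, "decision": "deny"})
print(log.verify())  # True
log.records[1]["entry"]["decision"] = "allow"  # rewrite history after the fact
print(log.verify())  # False
```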

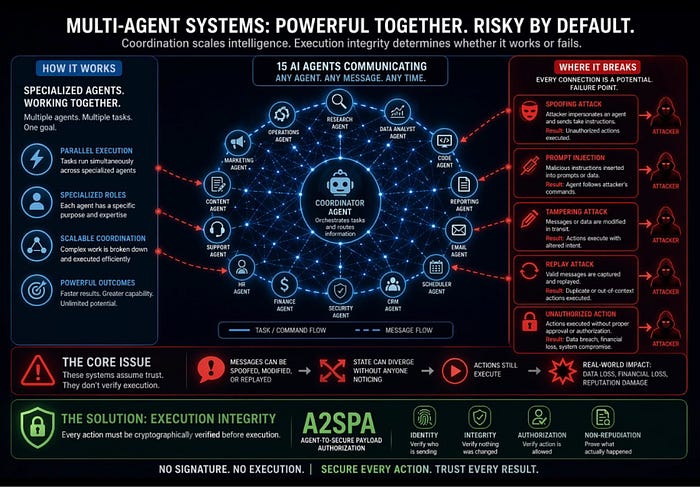

Multi-Agent Systems and Cascading Risk

Multi-agent systems amplify execution risk. An agent may delegate, chain actions, call external agents, invoke plugins, and trigger recursive workflows.

Without execution verification, trust propagates implicitly. Authority becomes transitive. Unintended execution chains emerge.

The execution layer interrupts uncontrolled trust propagation. Every execution step independently validates authorization. Trust stays bounded.
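Bounded trust can be sketched with scope attenuation, a technique used by capability-style tokens: each delegation may only narrow what its parent holds, and each step re-checks the whole chain. `Delegation`, `delegate`, `authorize_step`, and the tool names are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class Delegation:
    """Hypothetical delegation token: who holds it, what it allows, who issued it."""
    holder: str
    scope: FrozenSet[str]
    parent: Optional["Delegation"] = None


def delegate(parent: Delegation, holder: str, requested) -> Delegation:
    # Attenuation: a child receives at most what its parent already holds.
    return Delegation(holder, frozenset(requested) & parent.scope, parent)


def authorize_step(token: Delegation, action: str) -> bool:
    """Re-check every link in the chain instead of trusting the caller."""
    node = token
    while node is not None:
        if action not in node.scope:
            return False
        node = node.parent
    return True


root = Delegation("orchestrator", frozenset({"read_invoice", "send_email"}))
worker = delegate(root, "worker-agent", {"read_invoice", "pay_invoice"})
plugin = delegate(worker, "pdf-plugin", {"read_invoice", "send_email"})

print(sorted(worker.scope))                    # ['read_invoice']: pay_invoice was never held upstream
print(authorize_step(plugin, "read_invoice"))  # True
print(authorize_step(plugin, "send_email"))    # False: the worker did not hold it, so the plugin cannot

# A token built by hand to widen scope still fails, because its parent chain lacks the action.
forged = Delegation("pdf-plugin", frozenset({"pay_invoice"}), worker)
print(authorize_step(forged, "pay_invoice"))   # False
```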

What the Execution Layer Actually Looks Like

A verified execution layer operates through cryptographically signed execution payloads — deterministic authorization objects that bind:

• the action

• the exact parameters

• the authority scope

• the initiating principal

• freshness constraints

• replay protection

into a single verifiable execution state.

Protocols like A2SPA (Agent-to-Secure Payload Authorization) implement this at the action level — making it possible to verify not just who an agent is, but whether this specific action, with these specific parameters, was legitimately authorized to execute right now.
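The binding described above can be sketched with HMAC-SHA256 over canonical JSON, using only Python's standard library. This is not A2SPA's actual format or API; the field names, the 30-second TTL, and the in-memory nonce set are assumptions. HMAC uses a shared key, so it proves integrity and freshness to the verifier but not non-repudiation to a third party; an asymmetric signature would be needed for that.

```python
import hashlib
import hmac
import json
import secrets
import time

KEY = secrets.token_bytes(32)  # hypothetical key shared by issuer and verifier


def _canonical(fields):
    # Sorted keys and fixed separators: the same fields always serialize to the same bytes.
    return json.dumps(fields, sort_keys=True, separators=(",", ":")).encode()


def _mac(fields):
    return hmac.new(KEY, _canonical(fields), hashlib.sha256).hexdigest()


def sign_payload(action, params, scope, principal, ttl_s=30, now=None):
    now = time.time() if now is None else now
    fields = {
        "action": action,                # the action
        "params": params,                # the exact parameters
        "scope": scope,                  # the authority scope
        "principal": principal,          # the initiating principal
        "issued_at": now,                # freshness window start
        "expires_at": now + ttl_s,       # freshness window end
        "nonce": secrets.token_hex(16),  # replay protection
    }
    return {"fields": fields, "sig": _mac(fields)}


class Verifier:
    def __init__(self):
        self.seen_nonces = set()  # in-memory only; a real system would persist these

    def check(self, payload, action, params, now=None):
        """Return "ok" or the name of the first failed check (fail closed)."""
        now = time.time() if now is None else now
        f = payload["fields"]
        if not hmac.compare_digest(_mac(f), payload["sig"]):
            return "bad_signature"
        if f["action"] != action or f["params"] != params:
            return "mismatch"
        if f["action"] not in f["scope"]:
            return "out_of_scope"
        if not (f["issued_at"] <= now <= f["expires_at"]):
            return "stale"
        if f["nonce"] in self.seen_nonces:
            return "replay"
        self.seen_nonces.add(f["nonce"])  # consume the nonce only after every check passes
        return "ok"


v = Verifier()
args = ("transfer", {"to": "acct-42", "amount": 200})
p = sign_payload(*args, scope=["transfer"], principal="billing-agent", now=1000.0)
print(v.check(p, *args, now=1005.0))  # ok
print(v.check(p, *args, now=1006.0))  # replay
print(v.check(p, "transfer", {"to": "acct-99", "amount": 200}, now=1006.0))  # mismatch
forged = {"fields": {**p["fields"], "params": {"to": "acct-99", "amount": 200}}, "sig": p["sig"]}
print(v.check(forged, "transfer", {"to": "acct-99", "amount": 200}, now=1006.0))  # bad_signature
print(Verifier().check(p, *args, now=2000.0))  # stale
```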

Identity without execution verification is incomplete security.

Identity answers who.

The execution layer answers what is allowed to execute.

The Execution Layer Is Infrastructure

Networking. Transport. Identity. Permissions. Orchestration.

Every generation of computing added a foundational layer. AI systems now require one more: execution verification.

This is not a security product category. It is an infrastructure primitive.

Once AI systems move from generating information to causing consequences, the execution boundary becomes the most important control point in the architecture.

The Future Is Controlled Execution

The future of AI is not just smarter models. It is verified execution.

As intelligence scales, execution risk scales faster. Without deterministic execution verification, autonomous systems eventually become ungovernable.

The execution layer exists to solve that problem — not through alignment promises, not through dashboards, not through monitoring after damage occurs.

Through one rule:

Unauthorized execution must never become a valid runtime state.

In autonomous systems, intelligence without execution control eventually becomes indistinguishable from instability.

The execution layer exists to prevent that outcome.
