Vibe Coding Is Breaking Production — And Nobody Wants to Talk About It

Developers are shipping AI-generated code they don’t understand. It works — until it catastrophically doesn’t. Here’s what’s actually going wrong, and how to use AI without building on quicksand.

There’s a term going viral in developer circles right now: vibe coding.

It means exactly what it sounds like. You open Claude or Copilot, describe roughly what you want, accept what comes back, and ship it. No deep reading. No understanding the architecture. Just vibes.

And honestly? It works. Until it doesn’t.

Developers are generating full Next.js apps with Claude but can’t explain async/await or debug a simple issue using the network tab. Developers treating AI like a magic wand are building on quicksand, and you don’t really know how screwed you are until it’s too late. (Trending Topics)

I’ve seen this up close. A startup I consulted for last month had an authentication system entirely written by AI. It passed all the tests. It looked clean. It was also silently storing plaintext passwords in a logging table that nobody knew existed. The AI had added debug logging during generation and never removed it.

It had been in production for four months.

This isn’t an anti-AI story. AI coding tools are genuinely transformative — I use them every day. This is a story about the gap between using AI and understanding what AI gives you. And right now, that gap is getting people fired, getting companies breached, and quietly causing production disasters everywhere.

What’s Actually Going Wrong

1. Silent Security Holes

The widespread adoption of AI coding tools is shaping the cybersecurity environment in 2026, as hackers increasingly exploit weaknesses in hastily produced vibe-coded software. (Etcjournal)

The irony is sharp. AI writes the code faster than ever. Attackers exploit the holes faster than ever. The net result for teams that don’t review what AI generates is a perfect storm.

Here’s a real pattern. Ask any AI to “create a user login endpoint” and you’ll likely get something like this:

# What AI often generates: looks fine at first glance
from flask import Flask, request, jsonify
import sqlite3

app = Flask(__name__)

@app.route("/login", methods=["POST"])
def login():
    username = request.json["username"]
    password = request.json["password"]

    conn = sqlite3.connect("users.db")
    cursor = conn.cursor()

    # 🚨 SQL INJECTION: user input directly in query
    query = f"SELECT * FROM users WHERE username='{username}' AND password='{password}'"
    cursor.execute(query)
    user = cursor.fetchone()

    if user:
        # 🚨 No rate limiting: brute force trivially easy
        # 🚨 No token: session management missing entirely
        return jsonify({"status": "logged in", "user": user})

    return jsonify({"status": "failed"}), 401

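Three critical vulnerabilities are flagged in the comments, and there is a fourth they miss: the query compares the raw password, so passwords must be sitting in the database unhashed. All generated confidently. All look reasonable to someone who just wanted a login endpoint and didn’t scrutinise the output.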
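To see why the f-string query is so dangerous, here is a minimal sketch that reproduces it against an in-memory SQLite database. The table layout, the `alice` account, and the helper names are assumptions made up for this demo (the plaintext password column mirrors the vulnerable version, not something to copy).

```python
import sqlite3

def make_db() -> sqlite3.Connection:
    """Create an in-memory users table with one hypothetical account."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (username TEXT, password TEXT)")
    conn.execute("INSERT INTO users VALUES ('alice', 's3cret')")
    return conn

def login_vulnerable(conn: sqlite3.Connection, username: str, password: str) -> bool:
    # Same pattern as the generated endpoint: input is pasted into the SQL text.
    query = f"SELECT * FROM users WHERE username='{username}' AND password='{password}'"
    return conn.execute(query).fetchone() is not None

def login_parameterised(conn: sqlite3.Connection, username: str, password: str) -> bool:
    # Placeholders keep the input as data; it is never parsed as SQL.
    query = "SELECT * FROM users WHERE username = ? AND password = ?"
    return conn.execute(query, (username, password)).fetchone() is not None

conn = make_db()
payload = "' OR 1=1 --"
print(login_vulnerable(conn, payload, "anything"))     # True: logged in without valid credentials
print(login_parameterised(conn, payload, "anything"))  # False: the payload is just an odd username
```

The payload closes the quoted username, adds a condition that is always true, and comments out the password check. With placeholders, the same string is only ever compared against the username column.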

Here’s what it should look like:

from flask import Flask, request, jsonify
from flask_limiter import Limiter
from flask_limiter.util import get_remote_address
import sqlite3
import bcrypt
import jwt
import os
from datetime import datetime, timedelta, timezone

app = Flask(__name__)
SECRET_KEY = os.environ["JWT_SECRET_KEY"]  # Never hardcoded

# Rate limiter keyed on client IP; the limit itself is set on the route
limiter = Limiter(app=app, key_func=get_remote_address)

@app.route("/login", methods=["POST"])
@limiter.limit("5 per minute")  # Max 5 login attempts per minute per IP
def login():
    data = request.get_json(silent=True)
    if not isinstance(data, dict) or "username" not in data or "password" not in data:
        return jsonify({"error": "Missing credentials"}), 400

    username = data["username"]
    password = data["password"]

    conn = sqlite3.connect("users.db")
    try:
        # Parameterised query: user input is bound as data, never parsed as SQL
        user = conn.execute(
            "SELECT id, password_hash FROM users WHERE username = ?",
            (username,),
        ).fetchone()
    finally:
        conn.close()  # Close the connection even if the query raises

    # bcrypt checks the password against the stored hash (assumed stored as text).
    # Unknown user and wrong password return the same error. Note that the
    # bcrypt check is skipped for unknown users, so response time can still differ.
    if not user or not bcrypt.checkpw(password.encode(), user[1].encode()):
        return jsonify({"error": "Invalid credentials"}), 401

    # Return a signed JWT, not raw user data
    token = jwt.encode(
        {
            "user_id": user[0],
            "exp": datetime.now(timezone.utc) + timedelta(hours=24),
        },
        SECRET_KEY,
        algorithm="HS256",
    )
    return jsonify({"token": token}), 200

The difference between these two functions is the difference between “it works in testing” and “it survives contact with the real world.”

2. Supply Chain Attacks Are Quadrupling

This one is less about the code AI writes and more about the packages AI confidently tells you to install.

Over the past five years, major supply chain and third-party breaches have increased sharply, with incidents quadrupling. IBM X-Force also observed a 44% year-over-year increase in the exploitation of public-facing applications, a risk amplified by supply chain attacks targeting development ecosystems and trusted infrastructure. (Prompt Nest)

AI models recommend packages based on training data. That training data has a cutoff. A package that was safe and popular when the model was trained may now be abandoned, compromised, or replaced by a typosquatted malicious clone.

Here’s a Python script that validates every package your AI suggests before you install it:

import json
import os
import subprocess
import tempfile
from datetime import datetime, timezone

import requests


def audit_package(package_name: str) -> dict:
    """
    Before installing any AI-recommended package, run this. It checks:
    - PyPI existence
    - Days since the latest release
    - Recent download counts (via pypistats.org)
    - Known vulnerabilities via pip-audit
    It does not check whether the name looks like a typosquat.
    """
    result = {
        "package": package_name,
        "safe_to_install": True,
        "warnings": [],
        "verdict": "",
    }

    # 1. Check PyPI metadata
    try:
        resp = requests.get(f"https://pypi.org/pypi/{package_name}/json", timeout=5)
        if resp.status_code == 404:
            result["safe_to_install"] = False
            result["warnings"].append("❌ Package not found on PyPI: possible hallucination")
            result["verdict"] = "🚨 DO NOT INSTALL"
            return result
        resp.raise_for_status()
        data = resp.json()

        # Check the upload date of the latest release
        latest_version = data["info"]["version"]
        files = data.get("releases", {}).get(latest_version, [])
        if files:
            # PyPI reports upload_time as a naive UTC timestamp
            release_date = datetime.fromisoformat(files[-1]["upload_time"]).replace(tzinfo=timezone.utc)
            days_old = (datetime.now(timezone.utc) - release_date).days
            if days_old > 730:  # 2 years
                result["warnings"].append(f"⚠️ Last updated {days_old} days ago: possibly abandoned")

        # Check download stats (low downloads = suspicious)
        downloads_resp = requests.get(
            f"https://pypistats.org/api/packages/{package_name}/recent", timeout=5
        )
        if downloads_resp.status_code == 200:
            monthly = downloads_resp.json().get("data", {}).get("last_month", 0)
            if monthly < 1000:
                result["warnings"].append(f"⚠️ Only {monthly:,} downloads last month: low popularity")
    except (requests.RequestException, ValueError, KeyError) as e:
        result["warnings"].append(f"⚠️ Could not read PyPI metadata: {e}")

    # 2. Check for known vulnerabilities via pip-audit.
    # pip-audit audits environments or requirements files, not a bare package
    # name, so write a one-line requirements file. Resolving that file makes
    # pip fetch the package and its dependencies to read their metadata.
    req_path = None
    try:
        with tempfile.NamedTemporaryFile("w", suffix=".txt", delete=False) as req:
            req.write(package_name + "\n")
            req_path = req.name
        audit = subprocess.run(
            ["pip-audit", "-r", req_path, "--format", "json", "--progress-spinner", "off"],
            capture_output=True, text=True, timeout=120,
        )
        report = json.loads(audit.stdout or "{}")
        # Recent pip-audit versions emit {"dependencies": [...]}; older ones a bare list
        deps = report.get("dependencies", []) if isinstance(report, dict) else report
        vuln_count = sum(len(dep.get("vulns", [])) for dep in deps)
        if vuln_count:
            result["safe_to_install"] = False
            result["warnings"].append(
                f"🚨 {vuln_count} known vulnerability report(s) in this package or its dependencies"
            )
        elif audit.returncode != 0:
            result["warnings"].append("⚠️ pip-audit failed: vulnerability status unknown")
    except FileNotFoundError:
        result["warnings"].append("⚠️ pip-audit not available: install with pip install pip-audit")
    except (subprocess.TimeoutExpired, json.JSONDecodeError):
        result["warnings"].append("⚠️ pip-audit did not complete: vulnerability status unknown")
    finally:
        if req_path:
            os.unlink(req_path)

    # Final verdict
    if not result["safe_to_install"]:
        result["verdict"] = "🚨 DO NOT INSTALL"
    elif result["warnings"]:
        result["verdict"] = "⚠️ INSTALL WITH CAUTION: review warnings"
    else:
        result["verdict"] = "✅ Looks safe to install"
    return result


def audit_requirements(packages: list[str]) -> None:
    print(f"\n{'=' * 55}")
    print("  Package Safety Audit")
    print(f"  Checking {len(packages)} package(s)...")
    print(f"{'=' * 55}\n")
    for pkg in packages:
        report = audit_package(pkg)
        print(f"  📦 {report['package']}")
        print(f"     {report['verdict']}")
        for warning in report["warnings"]:
            print(f"     {warning}")
        print()


# Before installing anything AI recommended
if __name__ == "__main__":
    audit_requirements([
        "requests",
        "fastapi",
        "flask",
        "some-random-package-ai-suggested",
    ])

Run this on every package your AI assistant recommends. One compromised dependency in your supply chain can expose every user of your application.
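The audit script above has no step that compares a name against packages you already trust, which is how typosquats are usually spotted. Here is a minimal sketch of that check using only the standard library. The `KNOWN_PACKAGES` list, the function name, and the 0.8 similarity cutoff are assumptions for illustration; a real check would load a much larger list and tune the threshold.

```python
import difflib
from typing import Optional

# Hypothetical allow-list; in practice, load the packages your team already trusts.
KNOWN_PACKAGES = ["requests", "flask", "fastapi", "numpy", "pandas", "django"]

def possible_typosquat(name: str, known: list[str] = KNOWN_PACKAGES,
                       cutoff: float = 0.8) -> Optional[str]:
    """Return the trusted name that `name` closely resembles, or None."""
    candidate = name.lower().replace("_", "-")
    if candidate in known:
        return None  # exact match: it is the trusted package itself
    # get_close_matches ranks candidates by difflib's similarity ratio
    matches = difflib.get_close_matches(candidate, known, n=1, cutoff=cutoff)
    return matches[0] if matches else None

for pkg in ["requests", "reqeusts", "zzzz-unrelated"]:
    lookalike = possible_typosquat(pkg)
    if lookalike:
        print(f"⚠️ {pkg} looks a lot like {lookalike}: check the spelling")
    else:
        print(f"{pkg}: no close match to a trusted name")
```

A near miss is a prompt to go and look, not proof of malice. Plenty of legitimate packages have names close to popular ones, so treat a hit as a warning rather than a verdict.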

3. The Memory and RAM Crisis Nobody Is Talking About

Here’s a problem hitting developers right now that has nothing to do with code quality — it’s infrastructure.

The Verge reports a worsening RAM shortage in 2026, with knock-on effects touching everything from smartphones and laptops to gaming hardware, especially as AI features increase baseline memory requirements and data-centre demand competes for similar components. (TechCrunch)

What does this mean practically? The ML models you’re trying to run locally are getting bigger. The inference costs in the cloud are quietly creeping up. And the “minimum viable hardware” for AI-powered applications is rising every quarter.

Here’s a Python memory profiler you should be running on any AI-heavy workload right now:

import functools
import time
import tracemalloc
from typing import Callable


def memory_profile(func: Callable) -> Callable:
    """
    Decorator that profiles memory usage and execution time.
    Wrap any function you suspect is leaking or using too much memory.
    Note: tracemalloc only sees allocations made through Python's allocator,
    so memory held by native libraries may not show up.
    """
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        tracemalloc.start()
        start_time = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            # Runs even if func raises, so tracing is always stopped
            elapsed = time.perf_counter() - start_time
            current, peak = tracemalloc.get_traced_memory()
            tracemalloc.stop()

            print(f"\n📊 Memory Profile: {func.__name__}")
            print(f"   Current usage : {current / 1024:.1f} KB")
            print(f"   Peak usage    : {peak / 1024:.1f} KB")
            print(f"   Execution time: {elapsed:.4f}s")
            if peak > 100 * 1024 * 1024:  # 100MB
                print("   ⚠️ WARNING: Peak usage exceeded 100MB")
    return wrapper


# Example: profile a document processing pipeline
@memory_profile
def process_documents(file_paths: list[str]) -> list[str]:
    """
    Any AI-heavy workload.
    Find out exactly how much memory it's actually consuming.
    """
    results = []
    for path in file_paths:
        # Simulate document processing
        with open(path, "r", errors="ignore") as f:
            content = f.read()
        # Your AI processing here
        results.append(f"Processed: {path} ({len(content)} chars)")
    return results
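Once the profiler reports a high peak, the usual first fix is to stop holding everything in memory at once. The sketch below compares an eager and a lazy version of the same computation, using the same `tracemalloc` calls as the decorator. The helper names and the workload are made up for illustration, and the numbers it prints will vary by machine and Python version.

```python
import tracemalloc

def peak_bytes(fn, *args) -> int:
    """Run fn(*args) and return the peak Python-level allocation in bytes."""
    tracemalloc.start()
    try:
        fn(*args)
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()  # always stop tracing, even if fn raises
    return peak

def total_eager(n: int) -> int:
    squares = [i * i for i in range(n)]  # the whole list lives in memory at once
    return sum(squares)

def total_lazy(n: int) -> int:
    return sum(i * i for i in range(n))  # generator: one value at a time

n = 200_000
print(f"eager peak: {peak_bytes(total_eager, n) / 1024:.0f} KB")
print(f"lazy peak : {peak_bytes(total_lazy, n) / 1024:.0f} KB")
```

Both functions return the same total, but the eager one has to keep every intermediate value alive until the sum finishes. The same idea applies to reading files line by line instead of calling `f.read()` on each one.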

In a world where RAM is getting expensive and inference costs are rising, understanding your memory footprint is no longer optional.

How to Use AI Without Building on Quicksand

This isn’t about using AI less. It’s about using it smarter. Three rules I’ve landed on after a year of building AI-assisted systems:

Rule 1: Never ship code you can’t explain line by line. If an AI generates 50 lines and you understand 40 of them, stop. Ask the AI to explain the other 10 before you move on. The explanation itself usually surfaces the problem.

Rule 2: Make AI generate the tests first. Ask for the test suite before the implementation. Tests make explicit what the code is supposed to do — and they catch the security and logic holes that confident-looking generated code tends to hide.

# Ask your AI: "Write tests for a login endpoint first.
# Don't write the implementation yet."
import pytest

from app import app  # Assumption: the Flask app lives in app.py


@pytest.fixture
def client():
    app.config["TESTING"] = True
    with app.test_client() as test_client:
        yield test_client


def test_login_rejects_sql_injection(client):
    payload = {"username": "' OR 1=1 --", "password": "anything"}
    response = client.post("/login", json=payload)
    assert response.status_code == 401  # Must reject, not log in


def test_login_returns_token_not_user_data(client):
    # Assumes the test database is seeded with user "valid" / password "correct"
    response = client.post("/login", json={"username": "valid", "password": "correct"})
    body = response.get_json()
    assert "token" in body
    assert "password" not in body  # Never return sensitive fields


def test_login_rate_limits_brute_force(client):
    # Kept last: it exhausts the per-IP limit that the other tests share
    for _ in range(10):
        client.post("/login", json={"username": "admin", "password": "wrong"})
    response = client.post("/login", json={"username": "admin", "password": "wrong"})
    assert response.status_code == 429  # Must throttle after limit

When you write the tests first, the AI has to satisfy them. Suddenly the security requirements are explicit and the generated code is accountable to something concrete.

Rule 3: Run an automated audit on everything before it merges.

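# .github/workflows/ai-code-review.yml
# Runs on every PR: catches what vibe coding misses
name: AI Code Safety Audit

on: [pull_request]

jobs:
  audit:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - uses: actions/setup-python@v5
        with:
          python-version: "3.12"

      - name: Install audit tools
        run: pip install bandit pip-audit semgrep

      - name: Security static analysis
        run: bandit -r . -ll -x ./tests

      - name: Dependency vulnerability check
        # Assumes dependencies are listed in requirements.txt; without -r,
        # pip-audit audits the runner's environment instead of your project
        run: pip-audit -r requirements.txt --progress-spinner off

      - name: OWASP pattern scan
        # --error makes semgrep exit non-zero when it has findings
        run: semgrep --config p/owasp-top-ten --error .

# A failing step fails the check. The PR is only blocked from merging
# if branch protection marks this check as required.
# The AI generates the code. The pipeline reviews it.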

This is the kind of system that can flag problems like the plaintext password logging table. Automatically. Before it ships. On every PR.
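Static scanners will not flag every leaked secret, so it helps to add a runtime guard on the logging path as well. What follows is a minimal sketch of a `logging.Filter` that masks sensitive fields before a record is written. The `SENSITIVE_KEYS` set, the class name, and the `auth_demo` logger are assumptions for illustration, and the filter only covers values passed as dict-style log arguments.

```python
import io
import logging

SENSITIVE_KEYS = {"password", "token", "secret"}  # hypothetical list; extend for your app

class RedactSecrets(logging.Filter):
    """Mask sensitive values in dict-style log arguments before they are emitted."""
    def filter(self, record: logging.LogRecord) -> bool:
        if isinstance(record.args, dict):
            record.args = {
                key: "[REDACTED]" if str(key).lower() in SENSITIVE_KEYS else value
                for key, value in record.args.items()
            }
        return True  # never drop the record, only rewrite its arguments

def build_logger(stream) -> logging.Logger:
    logger = logging.getLogger("auth_demo")
    logger.handlers.clear()
    logger.setLevel(logging.DEBUG)
    logger.propagate = False
    handler = logging.StreamHandler(stream)
    handler.addFilter(RedactSecrets())  # runs before the handler formats the message
    logger.addHandler(handler)
    return logger

stream = io.StringIO()
log = build_logger(stream)
log.debug(
    "login attempt: user=%(username)s password=%(password)s",
    {"username": "alice", "password": "hunter2"},
)
print(stream.getvalue().strip())  # login attempt: user=alice password=[REDACTED]
```

A secret that is formatted into the message string before the logging call bypasses this filter entirely, so pair it with a test that captures log output and asserts the raw password never appears.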

The Bottom Line

Companies building systems and workflows with agents will be the winners in 2026 (Trending Topics). That’s true. But the companies that win won’t be the ones who shipped the most AI-generated code. They’ll be the ones who shipped AI-generated code they actually understood, tested, and secured.

Vibe coding is real. The productivity gains are real. The risks are equally real.

The developers who thrive in this era aren’t the ones who use AI the most. They’re the ones who use it the most thoughtfully — who treat AI output as a first draft from a brilliant but occasionally reckless colleague, not as ground truth.

Read the code. Write the tests. Run the audits.

That’s not slower. In the long run, that’s the only way that’s actually fast.

References

- IBM X-Force — Threat Intelligence Index 2026 — ibm.com/think/insights

- Just Security — Key Trends Shaping Tech Policy in 2026 — justsecurity.org

- Tech Startups — Top Tech News March 9, 2026 — techstartups.com

- Krebs on Security — Microsoft Patch Tuesday March 2026 — krebsonsecurity.com

- Brian Jenney / Medium — The 5 Worst Tech Trends of 2025 — medium.com

- The Verge — RAM Shortage 2026 — theverge.com

- OWASP — Top 10 Security Risks — owasp.org

- Bandit — Python Security Linter — bandit.readthedocs.io

POSTS ACROSS THE NETWORK

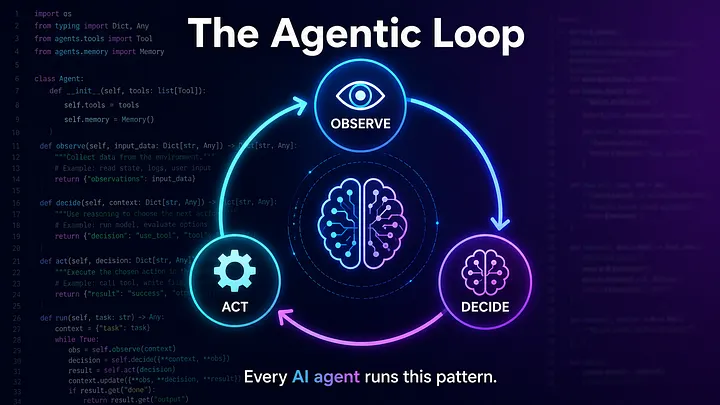

The Compounding Problem in Agentic AI Era

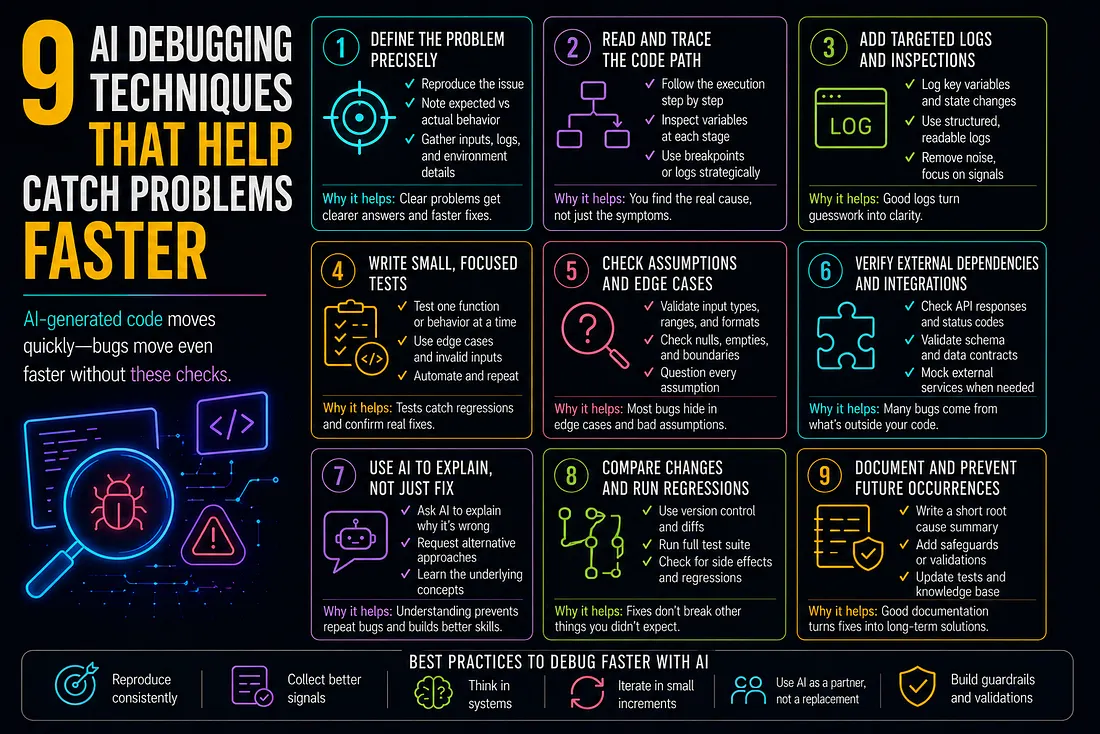

9 AI Debugging Techniques That Help Catch Problems Faster

How to Add SMS to Marketo Smart Campaigns Without Breaking Your Workflow

Best MCP Server for SEO in 2026: Guide for GEO, AEO, and SERM Experts

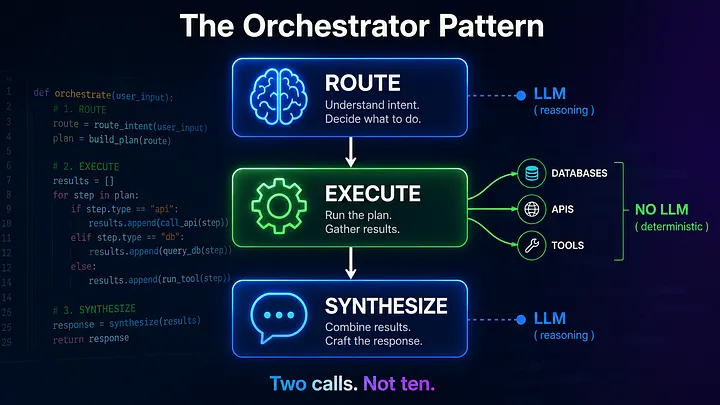

Beyond the Agentic Loop: The Orchestrator Pattern for Multi-Agent Systems