On 24 August 2001, Air Transat Flight 236 ran out of fuel over the Atlantic Ocean. The Airbus A330 glided for nineteen minutes before the crew managed to land it on a military airstrip in the Azores. All 306 people on board survived, which is something close to a miracle. The subsequent investigation found that the crew had been awake for over seventeen hours when they made the maintenance error that caused the fuel leak. Nobody set out to make a catastrophic mistake. They were simply exhausted, and exhaustion had quietly eroded the quality of their judgement without their being aware of it.

This is the thing about fatigue that makes it so dangerous in high-stakes environments. It does not announce itself. The tired pilot does not feel as impaired as a drunk one, even when the cognitive deficit is comparable. The fatigued surgeon does not experience their reaction time slowing. The drowsy security analyst does not notice the threat signature they just scanned past. Fatigue is insidious precisely because it degrades the very faculties, i.e. self-awareness, judgement and attention, that would otherwise allow a person to recognise they are impaired.

Artificial intelligence (AI) is changing that. Not by making people less tired but by watching for what they cannot see in themselves.

The Scale of the Problem

Before considering the solution, the problem deserves to be stated clearly, because the numbers are stark enough that they still surprise people who encounter them for the first time.

The United States National Highway Traffic Safety Administration (NHTSA) estimates that drowsy driving causes approximately 100,000 crashes annually in the United States alone, resulting in around 1,550 deaths and 71,000 injuries. These are conservative figures, since fatigue is notoriously difficult to establish post-incident. The actual numbers are almost certainly higher.

In healthcare, a study published in the Journal of the American Medical Association found that medical residents working shifts of twenty-four hours or more made 36 percent more serious medical errors than those working shorter shifts. A separate analysis estimated that fatigue-related medical errors contribute to approximately 100,000 patient deaths in the United States every year, a figure comparable to deaths from breast cancer or AIDS in the same period.

Aviation has arguably the most rigorous fatigue management framework of any industry, yet it still produces incidents like Air Transat. In the Colgan Air Flight 3407 crash in 2009, investigators found the captain had been awake for nearly twenty-four hours before the flight. In the Korean Air Lines Flight 6316 incident in 1999, controller fatigue contributed to a runway incursion that killed three people.

The pattern across industries is consistent. Fatigue accumulates silently, impairs without announcing itself and then, at the worst possible moment, manifests as an error that no amount of training or procedure could prevent because the person following the procedure was no longer cognitively capable of following it correctly.

What AI Actually Detects

The core challenge in fatigue detection is that tiredness is not a single, easily measurable variable. It is a constellation of subtle physiological and behavioural changes that aggregate over time and express differently in different people. AI systems address this by monitoring multiple data streams simultaneously and identifying patterns that no single sensor could capture alone.

Computer vision and facial analysis is the most visually familiar approach. Cameras, integrated into vehicle dashboards, cockpit displays or workstation monitors, continuously analyse facial expressions, eye movements and head position. The key markers are specific: the frequency and duration of blinks, the degree of eyelid droop, the occurrence of microsleeps (which last between one and thirty seconds and are easily missed by an observer but detectable by frame-level analysis), the rate of yawning and the gradual forward head tilt that precedes full drowsiness. Volvo has built camera-based driver monitoring into its vehicles since 2020. Mercedes-Benz’s Attention Assist system takes a different route, analysing steering patterns for the micro-corrections that characterise a driver fighting drowsiness.
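To make the blink and microsleep markers concrete, here is a minimal Python sketch of the frame-level step. It assumes an upstream vision model has already produced one eye-openness score per video frame (0.0 for fully closed, 1.0 for fully open). The function name, the `closed_below` cut-off and the one-second microsleep threshold are illustrative assumptions, not the parameters of any commercial system.

```python
from dataclasses import dataclass


@dataclass
class ClosureStats:
    blink_count: int          # closures shorter than the microsleep threshold
    microsleep_count: int     # closures at or above the threshold
    longest_closure_s: float  # duration of the longest closure, in seconds
    closed_fraction: float    # share of frames in which the eyes were closed


def summarise_eye_closures(openness, fps, closed_below=0.2, microsleep_s=1.0):
    """Summarise eye closures in a sequence of per-frame openness scores.

    Both thresholds are hypothetical values chosen for illustration.
    """
    durations = []  # length of each completed closure, in frames
    run = 0         # length of the closure currently in progress
    for value in openness:
        if value < closed_below:
            run += 1
        elif run:
            durations.append(run)
            run = 0
    if run:  # the clip ended while the eyes were still closed
        durations.append(run)

    seconds = [d / fps for d in durations]
    microsleeps = sum(1 for s in seconds if s >= microsleep_s)
    return ClosureStats(
        blink_count=len(seconds) - microsleeps,
        microsleep_count=microsleeps,
        longest_closure_s=max(seconds, default=0.0),
        closed_fraction=sum(durations) / len(openness) if openness else 0.0,
    )


# Example clip at 10 frames per second: a short blink, then a longer closure.
frames = [1.0] * 10 + [0.1] * 2 + [1.0] * 10 + [0.1] * 15 + [1.0] * 3
print(summarise_eye_closures(frames, fps=10))
```

A real system would also have to cope with missed detections, glasses and head turns. The sketch only shows why frame-level timing can separate an ordinary blink from a lapse lasting a second or more.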

Biometric monitoring captures the physiological dimension. Wearable devices such as smartwatches, specialised headsets and sensor-embedded clothing track heart rate variability, which decreases measurably with fatigue, along with skin conductance, body temperature and, in more specialised applications, electroencephalogram (EEG) brainwave patterns. SmartCap Technologies produces a hard hat lining, originally developed for mining and heavy industry, that reads EEG signals continuously. When brainwave patterns indicate the operator is approaching a microsleep, an alert is triggered before the lapse occurs. Rio Tinto deployed a version of this system across its Pilbara mining operations in Australia, reporting a significant reduction in fatigue-related incidents in the first year of deployment.
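Heart rate variability can be quantified in several ways. One widely used time-domain measure is RMSSD, the root mean square of successive differences between heartbeats. The sketch below computes it from a list of beat-to-beat (RR) intervals in milliseconds and compares a recent window against the wearer's own rested baseline. The helper `hrv_drop_ratio` is a hypothetical name introduced here for illustration, and no particular wearable is claimed to work this way.

```python
import math


def rmssd(rr_intervals_ms):
    """Root mean square of successive differences between heartbeats (ms)."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two RR intervals")
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def hrv_drop_ratio(baseline_rr_ms, recent_rr_ms):
    """Recent RMSSD as a fraction of the wearer's own rested baseline.

    A value well below 1.0 means variability has fallen relative to baseline.
    What counts as "well below" is left open here; any cut-off would need
    validating against real data.
    """
    base = rmssd(baseline_rr_ms)
    if base == 0:
        raise ValueError("baseline shows no variability")
    return rmssd(recent_rr_ms) / base


# Made-up intervals purely to show the call shape.
rested = [800, 820, 800, 825, 795]
recent = [800, 810, 800, 808, 801]
print(hrv_drop_ratio(rested, recent))
```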

Voice analysis is less visible but increasingly important, particularly in control rooms and command environments where video monitoring may be impractical. AI systems trained on voice data can detect the subtle changes in speech cadence, articulation and pitch modulation that accompany tiredness. A human listener would not consciously notice these changes, but AI pattern recognition identifies them with reasonable accuracy. Air traffic control environments in particular have begun exploring voice-based fatigue monitoring precisely because controllers spend long shifts at audio stations where camera-based approaches are less applicable.

Contextual analysis is what separates a sophisticated fatigue management system from a simple alert device. An isolated data point, such as one long blink, means very little. The same data point interpreted against the context of a twelve-hour shift, a 3 AM time slot, an operator who has been working for six consecutive days and an environmental temperature that has been rising for two hours means considerably more. AI systems that integrate contextual variables alongside physiological signals generate far fewer false positives and far more actionable alerts than single-signal approaches.
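One simple way to picture this kind of fusion is a logistic score that weighs a physiological signal together with shift context. The sketch below is only that: the function name, the input features and every weight are invented for illustration and are not calibrated against any data. A deployed system would learn such parameters from labelled records and would use far more inputs.

```python
import math


def fatigue_risk(long_blinks_per_min, hours_on_shift, hour_of_day,
                 consecutive_days_worked):
    """Combine one physiological signal with shift context into a 0..1 score.

    All weights and the bias below are made-up illustrative values.
    """
    # Assumption: treat 00:00 to 05:59 as the overnight low point.
    night = 1.0 if 0 <= hour_of_day < 6 else 0.0
    z = (-4.0
         + 0.8 * long_blinks_per_min
         + 0.15 * hours_on_shift
         + 1.2 * night
         + 0.2 * consecutive_days_worked)
    return 1.0 / (1.0 + math.exp(-z))


# The same single long blink, in two different contexts.
fresh = fatigue_risk(1, hours_on_shift=1, hour_of_day=10,
                     consecutive_days_worked=1)
worn = fatigue_risk(1, hours_on_shift=12, hour_of_day=3,
                    consecutive_days_worked=6)
print(f"mid-morning, first day: {fresh:.2f}")
print(f"3 AM, twelfth hour, sixth day: {worn:.2f}")
```

With these invented weights the second score comes out much higher than the first. That is the point of the paragraph above: the physiological reading is identical, and only the context has changed.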

Where It Is Already Working

The cases worth examining are not the pilot projects but the deployments that have been running long enough to produce measurable outcomes.

Trucking and long-haul transport. Seeing Machines, an Australian computer vision company, has deployed driver monitoring systems in commercial fleets across North America, Europe and Australia. Their platform analyses eye and head movements in real time and triggers escalating alerts: first an in-cab warning, then a notification to the fleet manager if the driver does not respond. In a 2022 analysis of their deployed fleet data, the company reported that its system had flagged fatigue events in drivers who subsequently had incidents, and that detection preceded the incident by a window long enough for intervention. Several large Australian mining companies mandate the system for all haul truck operators working night shifts.
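The escalation pattern described here, an in-cab warning first and a fleet manager notification if the driver does not respond, can be sketched as a small decision function. This is a generic illustration, not Seeing Machines' actual logic. The `Alert` levels, the parameter names and the thirty-second escalation delay are all assumptions.

```python
from enum import Enum


class Alert(Enum):
    NONE = "none"
    IN_CAB_WARNING = "in_cab_warning"
    NOTIFY_FLEET_MANAGER = "notify_fleet_manager"


def next_alert(fatigue_detected, seconds_since_warning, driver_acknowledged,
               escalate_after_s=30.0):
    """Decide the alert level for the current moment.

    `seconds_since_warning` is None when no warning is currently active.
    The escalation delay is a hypothetical default.
    """
    if not fatigue_detected or driver_acknowledged:
        return Alert.NONE
    if seconds_since_warning is None:
        return Alert.IN_CAB_WARNING        # first detection: warn the driver
    if seconds_since_warning >= escalate_after_s:
        return Alert.NOTIFY_FLEET_MANAGER  # no response in time: escalate
    return Alert.IN_CAB_WARNING            # still waiting for a response


print(next_alert(True, None, False))
print(next_alert(True, 45.0, False))
```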

Aviation. Qantas, working with researchers from Monash University, has conducted trials of wearable fatigue monitoring for long-haul crew on ultra-long-range routes, including their experimental Perth-London non-stop flights. The pilots wore wrist-based actigraphy devices that tracked sleep-wake cycles in the days before departure, feeding data into fatigue risk models that informed crew scheduling and in-flight rest rotation decisions. The data collected during those trials contributed to regulatory discussions about fatigue management on routes exceeding eighteen hours.

Healthcare. The Cleveland Clinic began trialling AI-based fatigue monitoring for surgical teams in 2022, using a combination of wearable biometrics and procedure-duration tracking to flag when operating teams were approaching cognitive load thresholds associated with increased error rates. The system does not intervene in real time but generates post-shift reports that inform scheduling decisions and allow clinical leadership to identify patterns, showing which surgeons, which procedure types and which shift configurations correlate with elevated fatigue indicators.

Defence and security. The United States Army Research Laboratory has been developing fatigue detection systems for combat vehicle operators and command post personnel for over a decade. Their most advanced work uses a combination of EEG-based headgear and eye-tracking to model operator cognitive state continuously, with the explicit goal of informing human-machine teaming decisions, essentially determining when a human operator should hand off a task to an autonomous system because their own cognitive state has degraded below the threshold required for reliable performance.

The Limits Worth Acknowledging

AI fatigue detection is real, operational and demonstrably effective in the deployments described above. It is also not a complete solution, and honesty about the limits is as important as the case for deployment.

The first limit is individual variation. Fatigue manifests differently across individuals, and models trained on population-level data will perform less well on outliers, i.e. people whose physiological baselines differ significantly from the training distribution. A system calibrated to detect fatigue in a general population may miss early-stage fatigue in a person who naturally has a low blink rate, or may raise false positives for someone whose resting facial expression resembles fatigue markers.
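A small worked example shows why the reference group matters. The sketch below scores the same blink-rate reading against two baselines: the person's own history and a population sample. All the numbers are invented for illustration, and `personal_z_score` is a hypothetical helper, not part of any product.

```python
from statistics import mean, stdev


def personal_z_score(value, baseline):
    """How many standard deviations `value` sits from a baseline sample."""
    spread = stdev(baseline)  # needs at least two baseline readings
    if spread == 0:
        raise ValueError("baseline has no spread")
    return (value - mean(baseline)) / spread


# Invented blinks-per-minute readings, for illustration only.
own_history = [7, 8, 9, 8, 8]        # someone with a naturally low blink rate
population = [16, 18, 17, 15, 19]    # a hypothetical population sample

reading = 8
print(personal_z_score(reading, own_history))  # typical for this person
print(personal_z_score(reading, population))   # far below the population norm
```

Scored against the person's own history, the reading is unremarkable. Scored against the population sample, it looks like a strong anomaly. Which baseline a system uses therefore shapes both the cases it misses and the false positives it raises.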

The second is adaptation. There is evidence that people exposed to continuous monitoring modify their behaviour in ways that can mask genuine fatigue, either consciously or unconsciously. An operator who knows a camera is watching their eyes may override early drowsiness through deliberate effort, suppressing the signal the system is designed to detect.

The third is the intervention problem. Detection without effective intervention is limited in value. An alert that a driver is fatigued is only useful if there is somewhere safe to stop, if the organisational culture supports the driver acting on the alert without professional penalty and if the scheduling system that created the fatigue condition in the first place is reformed. Technology deployed into a broken operational context will not fix the context.

The Direction of Travel

The trajectory is clear and accelerating. Driver drowsiness and attention warning systems have been required on new vehicle types in the European Union since 2022 under the General Safety Regulation, and Euro NCAP safety ratings now assess driver monitoring. The European Union Aviation Safety Agency (EASA) has been progressively tightening fatigue risk management regulations for airline operators. Wearable biometric monitoring is moving from pilot programmes to standard operating procedure in mining, heavy industry and military contexts.

The question is no longer whether AI can detect fatigue reliably. Several years of operational deployment have answered that. The question is whether the organisations deploying these systems are prepared to act on what they detect, to restructure shifts, reform scheduling practices and accept the operational cost of pulling a fatigued operator from duty rather than absorbing the far higher cost of the error they might otherwise make.

Detection is the easy part. What comes after it requires a different kind of courage.

POSTS ACROSS THE NETWORK

What is MySQL Throughput?

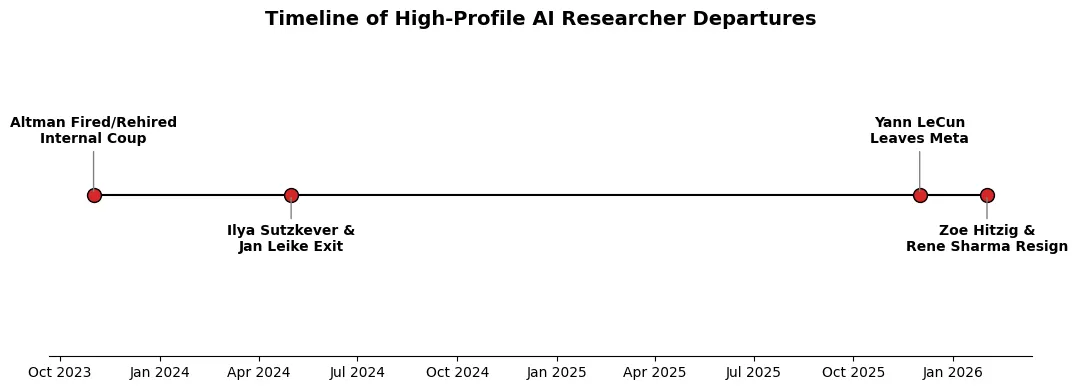

Why the Architects of AGI Are Fleeing Big Tech

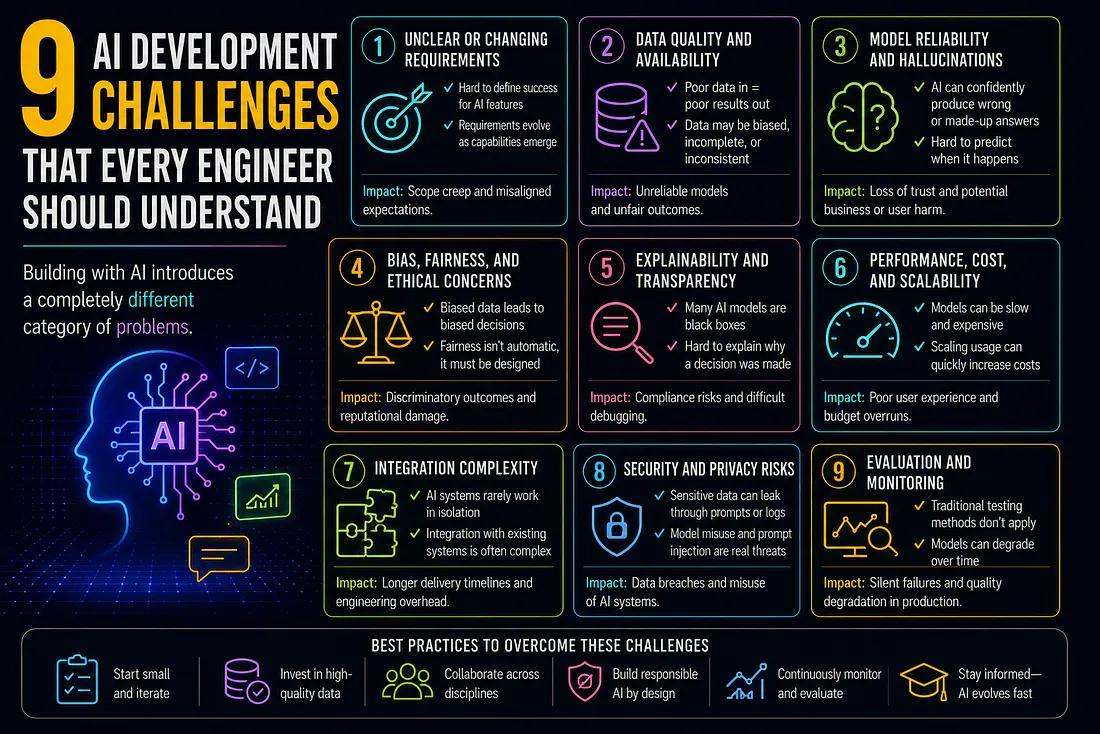

9 AI Development Challenges That Every Engineer Should Understand

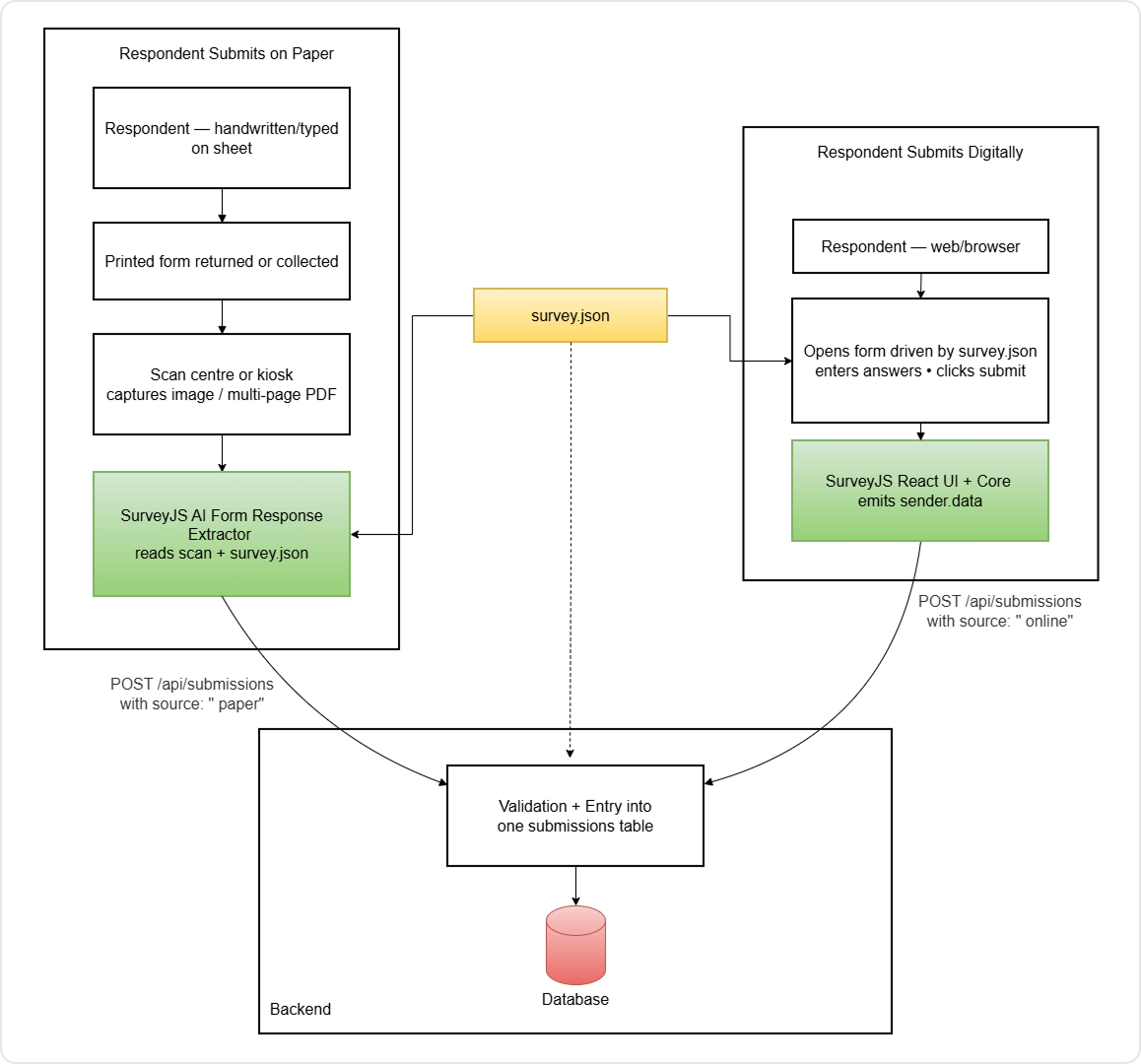

Extract Survey Responses from Paper Forms and PDFs with AI + SurveyJS