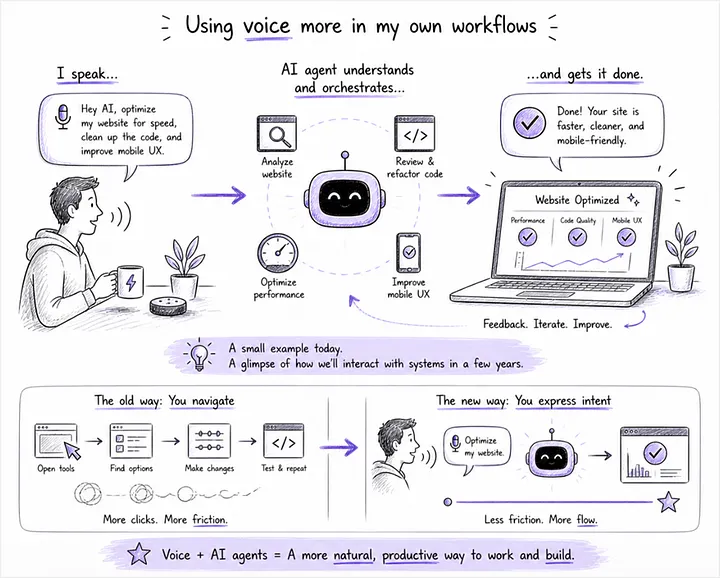

Last week, I was fighting a stubborn layout bug on my blog site, www.rahulsandil.com. The old me (no coding skills at all) would have called a friend, searched Google for help, and then spent a few frustrating hours troubleshooting all my WordPress plugins! The new me did this in minutes. I described the problem to Claude Cowork out loud. The fix was rendered in under a minute.

No keyboard. No mouse. No touch. Just intent, expressed in plain English.

The moment is small. The shift it points to is not.

For most of computing history, the interface was the input device. AI is dissolving that assumption. The distance between “I want to know something” and “I have the answer” keeps collapsing. What’s specifically exciting is not the model getting smarter. It’s the friction getting smaller. The cloud will not power that collapse alone. On-device AI is the holy grail of the next computing paradigm.

Where are we in this shift?

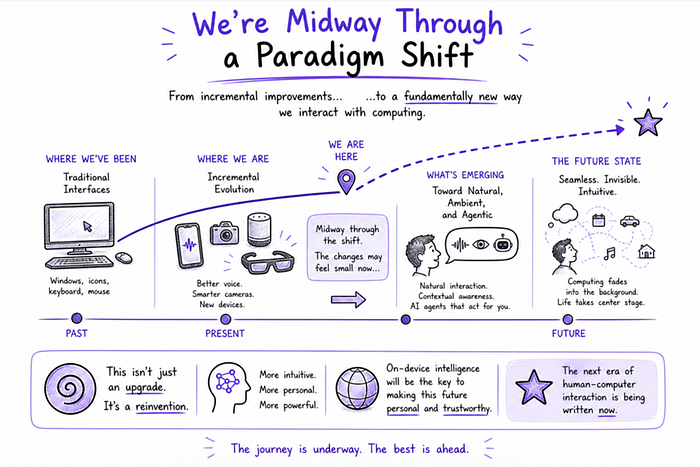

It’s tempting to talk about AI as if the new paradigm is fully formed. It isn’t. We’re midway through the transition.

The signals are everywhere if you know where to look. Smart glasses and AR headsets are introducing new ways to interact with information. Every premium smartphone ships with voice and natural language processing baked in. Real-time translation now runs locally, no cloud trip required. Agentic workflows are emerging in early form: you describe an outcome, and your device orchestrates the steps.

In my own life, I’m using voice for things I would have typed five years ago. I’ve experimented with voice-based coding, talking to an AI agent to optimize my personal site. I’ve watched my kids treat natural language as their default input.

These look like incremental features. Are they? I don’t think so. The evolution feels like a small, step-by-step process, but collectively these features point to something far bigger. The destination is not incremental.

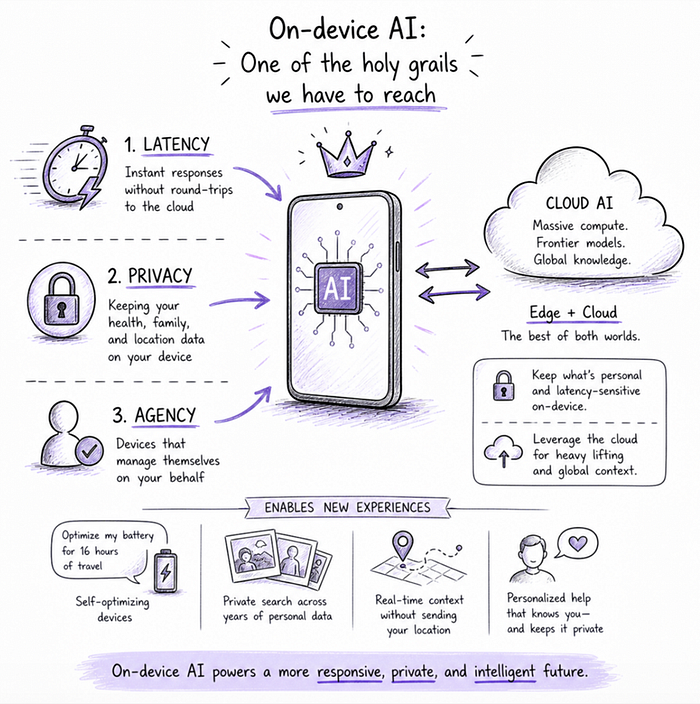

Why is on-device AI the holy grail?

If the interface is shifting, where the intelligence runs becomes a strategic question rather than a technical detail. Local intelligence solves problems the cloud cannot solve well. Three of them are foundational.

1. Latency and friction. When you ask a question or issue a command, you do not want a round-trip to a data center, a queue for compute, and a packet coming back. You want instant. You want fluid multi-step interactions. You want the device to adapt in real time as you speak and shift context. Every cloud round-trip is a small tax on the user experience. Add enough taxes, and the experience dies. (Translation: latency kills product-market fit before it kills the SLA.)

2. Privacy and safety. Some interactions should never leave the device. Health questions you’d ask your doctor. Location patterns for you and your family. Personal media spanning a decade. Tens of thousands of photos sitting in your camera roll. Do you really want your medical search history bouncing between your phone and someone else’s database? (Ouch.) On-device AI lets your device act on that data locally. The exposure surface shrinks. Trust grows.

3. Agentic device management. We’re at the start of devices that manage themselves on your behalf. Imagine saying:

- “Optimize my battery. I’m flying for 16 hours.”

- “Storage is full. Move what you can to the cloud, but keep my work files local.”

- “Find every photo of my kids at birthday parties wearing blue, going back ten years.”

- “Schedule my workouts around my travel calendar and remind me 30 minutes before each one.”

Those are not future commands. They are 2026 commands waiting for the right local agent layer. The device cannot delegate that decision-making to a cloud service that does not know your context. Core agentic functions belong on the device. Specialized work can call out to the cloud. The split matters.

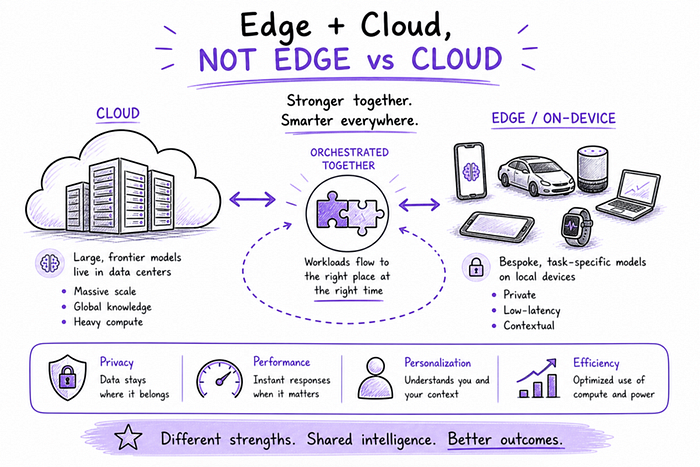

Does on-device AI replace the cloud?

No. The cloud is not going anywhere. Frontier-scale training will live in data centers for the foreseeable future. You cannot fit a trillion-parameter model on a smartphone, and you should not try.

The future is not edge versus cloud. The future is bespoke local models for common tasks, frontier models in the cloud for heavy lifting, and seamless orchestration between them.

What runs locally:

- Summarization and translation

- Personal search across your local files and media

- Contextual recommendations based on patterns the cloud should never see

- Device optimization, resource management, and self-healing

What runs in the cloud:

- Frontier reasoning that needs scale

- Global context and knowledge that updates faster than your device can

- Specialized models trained on data your device does not have

Get the orchestration right, and you keep the personal stuff personal, while still tapping global intelligence when it matters. Get it wrong, and you either bleed user trust to the cloud or starve your product of capability. Spoiler: Most product roadmaps in the market today are still betting wrong.
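To make the split concrete, here is a minimal sketch of one possible routing policy. It is an illustration under stated assumptions, not how any shipping device works: the `Task` fields, the `route` function, and the privacy-first ordering of the rules are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Task:
    # Every field here is a hypothetical label chosen for illustration.
    name: str
    touches_personal_data: bool    # e.g. health, location, photos
    needs_frontier_model: bool     # reasoning beyond a small local model
    needs_fresh_global_data: bool  # knowledge that changes faster than the device updates


def route(task: Task) -> Target:
    """Pick where a task runs under an assumed privacy-first policy."""
    # Assumption: personal data stays on the device, even when a
    # larger cloud model might produce a better answer.
    if task.touches_personal_data:
        return Target.LOCAL
    # Non-sensitive work goes to the cloud only when it needs scale
    # or knowledge the device does not have.
    if task.needs_frontier_model or task.needs_fresh_global_data:
        return Target.CLOUD
    # Everything else runs locally to avoid the round-trip.
    return Target.LOCAL


if __name__ == "__main__":
    examples = [
        Task("find photos of my kids at birthday parties", True, False, False),
        Task("summarize this note", False, False, False),
        Task("plan a multi-city trip with live prices", False, True, True),
    ]
    for task in examples:
        print(f"{task.name} -> {route(task).value}")
```

The order of the checks is the design choice. Putting the privacy rule first trades some capability for trust; a product that checks capability first makes the opposite bet.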

What does this mean for product and platform leaders?

If you are running an AI strategy for a product, platform, or device portfolio, three concrete implications follow.

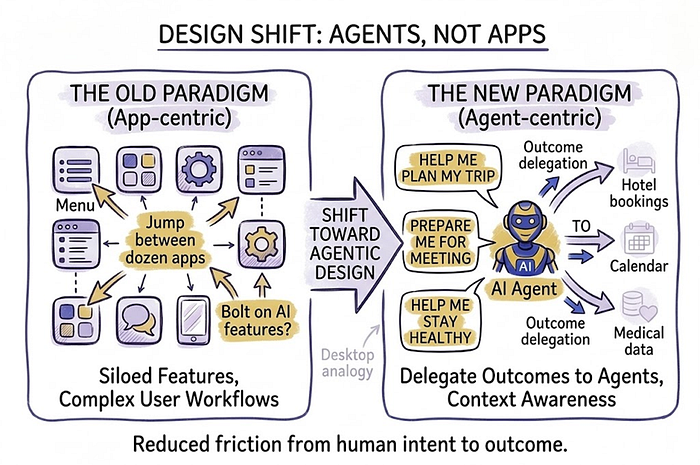

Design for natural interaction, not bolted-on features. The wrong question: “What AI can we add to this product?” The right question: “How do we collapse friction from intent to outcome?” That usually means voice as a primary control surface, context awareness baked in (location, time, prior interactions), and multi-modal input by default.

Invest in on-device capability now, even if your stack is cloud-heavy. Build the muscle for running smaller models locally. Build personalization that lives on the device. The capability to handle sensitive tasks without round-trips is no longer optional. Boards and CISOs will be asking product teams these questions within the next 12 months. Have the answer ready.

Think in agents, not apps. The compelling experiences will not ask users to bounce between dozens of apps. They will delegate outcomes. Plan my trip. Prep me for tomorrow’s meeting. Help me stay healthy, given my history. Agents will orchestrate apps and services in the background. On-device intelligence is what makes those agents feel personal and fast enough to actually use.

We’re building the new desktop all over again

In the 1980s and 1990s, the industry collectively figured out the desktop paradigm. Windows, icons, menus, pointers. We did not see the convention forming until it was already obvious. We are doing the same thing now for AI-native computing.

From MediaTek’s vantage point, powering phones, Chromebooks, TVs, smart speakers, and the connected devices in between, this is a multi-year design problem. It is not just a hardware question. It is not a model question alone. It is a question about how humans want to interact with intelligence that lives in their pockets, on their wrists, in their cars, and on their walls.

The evolution will feel quiet, year over year. New features will keep landing. New form factors. Slightly better prompts. Marginally smarter assistants. Look back from 2036, and the shift will feel obvious in retrospect.

The leaders who shape that shift, especially at the on-device layer, will define what the next decade of computing feels like.

What’s running locally on your device today, and what’s still making the round-trip to the cloud? The honest answer is the starting point for your 2026 strategy. Let’s talk in the comments.
